Imatest LLC

Abstract

In this paper we review the factors, beyond the megapixel count, that make up a camera’s overall image quality and compare the emerging Compact System Camera format to other formats, such as DSLR and point and shoot formats, on the basis of these image quality factors.

Introduction

This paper reviews the image quality factors (IQFs) that make up a camera’s overall image quality and compares the emerging Compact System Camera (CSC) format with DSLR and point-and-shoot formats on the basis of these IQFs. The paper also reviews key components of camera systems that influence IQFs and compares typical components in the emerging CSC format with typical components in the Digital Single Lens Reflex (DSLR) format.

Megapixel Count

Megapixel count has been successfully marketed as the single most important number in defining how good a camera is; most consumers now believe the megapixel count can be used as a measure of the overall image quality of a camera.

Unfortunately, the number of megapixels in a camera cannot be translated into a valid metric of image quality because the image quality of a camera system (either perceived by humans or processed in machine-vision applications) is inherently a combination of multiple IQFs. IQFs are determined by the interaction of multiple underlying components, not only the megapixel count of the sensor. Examples of image quality factors include lens sharpness, color accuracy, noise, and dynamic range, as well as perceptually relevant factors, such as print size and viewing distance. The following section describes key components that influence IQFs and highlights the principal differences between CSCs and DSLRs.

Compact System Cameras

CSCs are a relatively new format in the consumer camera market that has emerged since 2008. CSCs generally have the following properties:

- unlike DSLRs, they do not have an optical through-the-lens (TTL) viewfinder, but rather employ an electronic viewfinder

- unlike DSLRs, there is no flip mirror or pentaprism

- like DSLR cameras, they have interchangeable lenses

- large sensors compared to point-and-shoot cameras, although smaller than full-frame DSLRs

Electronic Viewfinder

Perhaps the most significant difference between CSCs and DSLRs is the TTL viewfinder. SLR film cameras require an optical subsystem for the viewfinder consisting of a flip mirror, a ground glass screen, a pentaprism and an eyepiece. By using an electronic viewfinder instead of an optical TTL viewfinder, camera bodies can be made much smaller and lighter, at lower cost and with fewer moving parts.

The presence of a flip mirror places a constraint on the minimum size of the flange focal length (FFL), which is the distance from the flange on the lens housing to the image plane. In a DSLR, the lens needs to be sufficiently far from the sensor to allow room for the flip mirror. As a general rule of thumb, the closer a lens is to the sensor, the smaller the lens will be. Thus, by removing the flip mirror, CSCs can be smaller than DSLRs for two separate but related reasons: the lack of the TTL optical subsystem eliminates optics, and the lack of the flip mirror enables smaller lenses because the lenses can be closer to the sensor.

Removing the flip mirror not only simplifies the camera system, making the camera both lighter and smaller, but also removes the vibrations caused by flipping the mirror. These vibrations are known as “mirror slap” and can reduce the sharpness of an image. Typically, mirror slap has the biggest impact for exposure times between 1/30 and 1 second, so it primarily affects images captured using a tripod. Many DSLRs can lock the mirror out of the way, which removes the mirror slap vibrations at the cost of disabling the TTL viewfinder.

While the vibrations of DSLR mirror-slap typically affect tripod-captured images, CSC cameras may experience a higher level of image-degrading camera shake when capturing hand-held images because they are lighter and hence more affected by hand motion. The effects of camera-shake are exacerbated for both formats when using the electronic LCD viewfinder with the camera held at arm’s length. Since CSC cameras are often held at arm’s length, DSLRs have an advantage in this regard, but the advantage is minimal in practice because image stabilization, available in most CSC lenses, is effective at the shutter speeds used for hand-held photography.

DSLR cameras typically use phase-detection-based autofocus. This method is accomplished by an optical subsystem embedded in the flip mirror that measures the phase from two or more points in the exit pupil (the image of the aperture stop) of the lens. In contrast, CSC cameras typically use contrast-measured autofocus, which relies on a contrast metric computed by analyzing the sensor’s pixel data. In general, the contrast-measured autofocus is comparable in speed and accuracy to the phase-detection-based autofocus algorithms used in DSLRs.

Sensor Size

CSC sensors have a considerably larger area (≈9 times) than the sensors in compact point-and-shoot cameras. The majority of the cameras on the CSC market use the Micro Four Thirds (17.3mm x 13.0mm, 4:3 aspect ratio) and APS-C (23.6mm x 15.7mm, 3:2 aspect ratio) formats. Compared to a full-frame 35mm DSLR sensor, the Micro Four Thirds sensor has roughly 26% of the area and the APS-C sensor has roughly 43%. The focal length multipliers (to effective 35mm focal lengths) for Micro Four Thirds and APS-C are 2.0 and 1.53, respectively. The reduced sensor size of the CSC sensor has both benefits and drawbacks when compared to DSLRs:

- The primary benefit is that a lens will, in general, be substantially smaller and lighter.

- When compared to point-and-shoot cameras, which often have 1/2.5” sensors, the Micro Four Thirds format has a signal-to-noise ratio (SNR) that is 3 times higher, and the APS-C format has an SNR that is 3.85 times higher.

- The primary drawback is that, compared to DSLRs, CSCs will experience an increase in image noise due to the smaller image (assuming the f/# and megapixel count are equal). In general, the full-frame DSLR will have an SNR that is 2 times that of the Micro Four Thirds format and 1.53 times that of the APS-C format.
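The area, focal-length-multiplier and SNR figures quoted above can be checked with a short calculation. The sketch below is illustrative only: the 36 mm x 24 mm full-frame size and the nominal 5.76 mm x 4.29 mm size for a 1/2.5” sensor are assumptions not stated in this paper, and the SNR comparison assumes shot-noise-limited capture at equal f/# and megapixel count, so that SNR scales with the square root of sensor area.

```python
import math

# Sensor dimensions in mm. Micro Four Thirds and APS-C are the values quoted
# in the text; the full-frame and 1/2.5" sizes are assumed nominal values.
SENSORS = {
    "full_frame": (36.0, 24.0),
    "aps_c": (23.6, 15.7),
    "micro_four_thirds": (17.3, 13.0),
    "compact_1_2.5": (5.76, 4.29),
}

def area(name):
    width, height = SENSORS[name]
    return width * height

def diagonal(name):
    width, height = SENSORS[name]
    return math.hypot(width, height)

def area_ratio(name, reference="full_frame"):
    """Sensor area as a fraction of the reference sensor area."""
    return area(name) / area(reference)

def focal_length_multiplier(name, reference="full_frame"):
    """Ratio of sensor diagonals (the 'crop factor')."""
    return diagonal(reference) / diagonal(name)

def snr_ratio(name, reference):
    """SNR of `name` relative to `reference`, assuming SNR ~ sqrt(area)."""
    return math.sqrt(area(name) / area(reference))

for fmt in ("micro_four_thirds", "aps_c"):
    print(
        fmt,
        "area vs full frame: %.2f" % area_ratio(fmt),
        "multiplier: %.2f" % focal_length_multiplier(fmt),
        "SNR vs 1/2.5 in.: %.2f" % snr_ratio(fmt, "compact_1_2.5"),
        "full-frame SNR advantage: %.2f" % snr_ratio("full_frame", fmt),
    )
```

Under these assumptions the results land close to the rounded figures in the text; small differences depend on the exact sensor dimensions assumed.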

Interchangeable Lenses

Like DSLRs, CSCs have interchangeable lenses. In practical terms, this is somewhat of a required feature for the CSC format, since the sensors are much larger than those of point-and-shoot cameras. Interchangeable lenses are required because lens designs must trade off features, such as low f/# for larger image size (the space-bandwidth product) or zoom focal lengths. The larger sensors of CSCs make it too challenging to create low-f/# lenses with large zoom ranges. Thus, the only way to cover the entire range of focal lengths from ultra-wide angle to super telephoto is to split the range up into many different lenses, each with a smaller zoom range (compared to point-and-shoot cameras).

When compared to DSLRs, this is again a partial advantage for CSCs because for the same f/#, it is possible to have a larger zoom range with equivalent MTF.

Other Considerations

One important aspect of a camera is how quickly the image is captured after triggering the shutter. The delay is referred to as the camera’s shutter lag, and a small shutter lag can be the difference between an outstanding photograph and an ordinary photograph, especially in action/sports photography and even portraiture, as facial expressions are fleeting. In terms of shutter lag, the CSC format is currently at a slight disadvantage compared to the DSLR format. DSLRs are also generally a bit faster in terms of how quickly shots can be taken in succession. Generally speaking, however, the CSC format is much closer to the DSLR format with regard to speed metrics (shutter lag, frames per second and shot-to-shot time) than to point-and-shoot cameras, and one can assume that over time the CSC format will closely approach DSLRs in this regard.

Image Quality Factors

This section identifies the principal IQFs that influence overall image quality and relates each IQF to underlying camera components, highlighting differences between DSLRs and CSCs.

Sharpness

The sharpness of a camera refers to the amount of detail the camera can reproduce in an image, and is, arguably, the most important IQF. As a system, a camera’s sharpness is determined by the sharpness of the lens, the coupling to the sensor, and the image processing algorithms applied to the image.

Lens Sharpness

A camera lens, which is itself a system composed of many different optical elements, acts as a signal-processing filter. In the same way that a microphone can detect sound waves and filter temporal frequencies, a lens forms a filtered image of an object.

The sharpness of a lens is typically quantified by its filter representation in the spatial-frequency domain, known as the modulation transfer function (MTF). MTF is the ratio of the modulation in the image to the modulation of the object as a function of spatial frequency. The impact of the MTF is to attenuate the spatial frequencies of the object being imaged, effectively blurring the image, as can be seen in figure 1. Figure 1 shows a modulation pattern that varies from low to high spatial frequencies. The upper portion of figure 1 shows the original and unaltered object, and the lower portion shows the image of the object as filtered by the lens MTF. The effect is that the MTF reduces the sine pattern’s contrast at high spatial frequencies.

Figure 1: Top: a modulation pattern with spatial frequency increasing from left to right. Bottom: the image of the above sine pattern as filtered by a lens’s MTF, showing a reduction in contrast at high spatial frequencies.
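The ratio that defines the MTF at a single spatial frequency can be sketched numerically. The example below assumes the usual Michelson definition of modulation, (max - min) / (max + min), which this paper does not spell out, and uses hypothetical intensity samples rather than measured data.

```python
def modulation(signal):
    """Michelson modulation (contrast) of a periodic intensity pattern."""
    brightest, darkest = max(signal), min(signal)
    return (brightest - darkest) / (brightest + darkest)

def mtf_at_frequency(object_signal, image_signal):
    """MTF at one spatial frequency: image modulation / object modulation."""
    return modulation(image_signal) / modulation(object_signal)

# Hypothetical samples over one period of a sine pattern at a single frequency.
object_samples = [0.5, 0.9, 0.5, 0.1]  # original pattern
image_samples = [0.5, 0.7, 0.5, 0.3]   # same pattern after blurring by the lens

print("MTF at this frequency: %.2f" % mtf_at_frequency(object_samples, image_samples))
```

Repeating this measurement across a sweep of spatial frequencies traces out the MTF curve.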

A closely related concept to the MTF is the point spread function (PSF). The PSF, another metric of a lens’s sharpness, is the image of a point source of light that has been blurred by the MTF of the lens. The image formation process can be equivalently conceptualized in the following two ways: a lens is a filter in the spatial frequency domain represented by the MTF, or a lens is a mapping from points in the object to distributed (blurred) points in the image represented by the PSF.

Diffraction Limit

The sharpness of a lens has an intrinsic upper-limit set by the laws of physics. When this best-case scenario is achieved, the lens is said to be “diffraction-limited”, meaning that the lens is free from all aberrations (deformations of the optical wavefront that reduce sharpness), and the only limiting factor to the sharpness is diffraction from the aperture stop. In a diffraction-limited lens, there is no modulation above the optical bandwidth of the lens; a lens is a filter and the lens f/# is inversely proportional to the bandwidth of the filter. The word “bandwidth” is taken here to mean the range of spatial-frequencies over which a lens can form an image.
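The inverse relationship between f/# and bandwidth can be made concrete with the standard result for an aberration-free lens with a circular aperture in incoherent light. The sketch below is an illustration, not a lens model from this paper; the 550 nm wavelength is an assumed value for green light.

```python
import math

def cutoff_frequency(f_number, wavelength_mm=550e-6):
    """Diffraction cutoff in cycles/mm: 1 / (wavelength * f/#)."""
    return 1.0 / (wavelength_mm * f_number)

def diffraction_mtf(freq, f_number, wavelength_mm=550e-6):
    """MTF of a diffraction-limited lens with a circular aperture."""
    s = freq / cutoff_frequency(f_number, wavelength_mm)  # normalized frequency
    if s >= 1.0:
        return 0.0  # no modulation above the optical bandwidth
    return (2.0 / math.pi) * (math.acos(s) - s * math.sqrt(1.0 - s * s))

for n in (2.8, 4.0, 8.0, 16.0):
    print("f/%-4s cutoff: %6.1f cycles/mm, MTF at 100 cycles/mm: %.2f"
          % (n, cutoff_frequency(n), diffraction_mtf(100.0, n)))
```

Doubling the f/# halves the cutoff frequency, which is why stopping a lens down eventually reduces sharpness even when aberrations are well controlled.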

Aberrations

Aberrations occur in a lens when the electromagnetic wave emerging from the lens deviates from a perfect (although clipped by the aperture stop) spherical wave. Aberrations reduce the MTF below the diffraction limit and equivalently reduce the bandwidth of the lens. The goal of lens design is often to find a configuration of lens elements (thicknesses, materials and surface shapes and curvatures) that minimizes and balances the aberrations in the lens subject to constraints, both optical (such as focal length and f/#) and mechanical (size constraints).

Common aberrations include spherical aberration (a variation of focus with aperture coordinate), field curvature (a variation of focus with image coordinate), astigmatism (a variation of focus between different directions of modulation for off-axis image coordinates), distortion (a variation of magnification with image coordinate), axial chromatic aberration (a variation of focus with wavelength) and lateral chromatic aberration (a variation of magnification with wavelength).

Space-Bandwidth Product

An important concept in image science is the space-bandwidth product (SBP). The SBP is a measure of the information capacity of a camera. It is simply the product of the size of the image and the bandwidth of the optic. The SBP is a measure of how complicated a lens design needs to be to attain low-aberration performance. A higher SBP typically implies that a more complex lens design is required to meet the same level of MTF as that of a lens with a lower SBP.

Generally speaking, Compact System Cameras benefit from the SBP because of their smaller image size and, correspondingly, shorter focal lengths. Shrinking the size of the image reduces the amount of information in an image at a given f/#. In simpler terms, reducing the size of the image makes it easier for an optical design to achieve low aberrations (or, alternatively, higher MTF). What this implies is that when adapting a lens designed for a DSLR format by simply scaling the size of the lens to a CSC format, the MTF will necessarily increase closer to the diffraction limit. It is easier to create a lens design with high bandwidth for the CSC format than it is for the DSLR format. Alternatively, this also means that a CSC format lens can achieve the same MTF at a given f/# with a simpler optical design than a DSLR lens.
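The scaling argument above can be illustrated with a rough calculation. The sketch below takes one common two-dimensional reading of the SBP, image area times the square of the optical bandwidth, and uses the diffraction cutoff 1 / (wavelength * f/#) as the bandwidth. Both choices, along with the 36 mm x 24 mm full-frame size and 550 nm wavelength, are assumptions made for illustration.

```python
def diffraction_cutoff(f_number, wavelength_mm=550e-6):
    """Optical bandwidth in cycles/mm for an aberration-free lens."""
    return 1.0 / (wavelength_mm * f_number)

def space_bandwidth_product(width_mm, height_mm, f_number, wavelength_mm=550e-6):
    """Resolvable cycles across the width times cycles across the height."""
    bandwidth = diffraction_cutoff(f_number, wavelength_mm)
    return (width_mm * bandwidth) * (height_mm * bandwidth)

# Same f/# for both formats, so only the image size differs.
full_frame = space_bandwidth_product(36.0, 24.0, f_number=4.0)
micro_four_thirds = space_bandwidth_product(17.3, 13.0, f_number=4.0)

print("SBP ratio (Micro Four Thirds / full frame): %.2f"
      % (micro_four_thirds / full_frame))
```

At equal f/# the ratio reduces to the ratio of image areas, which is the sense in which the smaller format asks less of the lens design.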

The reduced image size for CSCs implies the following:

- that for the same f/#, a CSC lens can attain the same level of MTF as a DSLR with a less complicated lens design,

- that for the same level of lens design complexity, the CSC lens can attain a lower f/# or higher MTF.

These points are generally true, but not always attainable in the real world. While reducing the size of a lens by simply scaling the design reduces the aberrations in the lens (which, in turn, improves the MTF), the manufacturing tolerances required to achieve the reduced aberrations correspondingly get tighter. Further, this assumes that there are aberrations to reduce, since an already diffraction-limited lens cannot be improved.

An important distinction between CSC lenses and DSLR lenses is that, often, the high-end lenses for DSLRs have been designed to meet the SBP constraints of the full 35mm film format, but these lenses are often used on smaller APS-C sized sensors. Much of the SBP is wasted on the smaller-sensor DSLRs when this occurs. By comparison, the lenses developed for the CSC format are typically designed to work with a single size sensor that fully utilizes the SBP.

Sensor Sharpness

The sensor acts as a filter in much the same way as the MTF of the lens. One significant difference, however, is the maximum frequency (bandwidth) a sensor can correctly respond to. This frequency, known as the Nyquist frequency (fnyq), is 1/2 the sampling frequency of the sensor. This maximum frequency is achieved when every other pixel is illuminated. When the bandwidth of the lens MTF is greater than the bandwidth of the sensor, the high spatial frequencies above fnyq in the lens MTF will be folded back by the sensor into lower spatial frequencies below fnyq. This phenomenon is known as aliasing, and it is responsible for moiré artifacts.

Essentially, this implies that the smaller the pixels are, the higher the sensor Nyquist frequency. In this regard, there is little to compare between DSLRs and CSCs: the image quality of both formats will suffer when the Nyquist frequency of the sensor is not well matched to the bandwidth of the lens.
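The relationship between pixel size, Nyquist frequency and aliasing can be sketched as follows. This is a simplified one-dimensional model that treats the sensor as an ideal sampler with one sample per pixel pitch; the 4 µm pitch is a hypothetical value, and Bayer color filters and anti-aliasing filters are ignored.

```python
def nyquist_frequency(pixel_pitch_mm):
    """Nyquist frequency in cycles/mm: half the sampling frequency."""
    sampling_frequency = 1.0 / pixel_pitch_mm
    return sampling_frequency / 2.0

def aliased_frequency(freq, pixel_pitch_mm):
    """Apparent frequency after sampling; frequencies above Nyquist fold back."""
    sampling_frequency = 1.0 / pixel_pitch_mm
    nearest_multiple = round(freq / sampling_frequency)
    return abs(freq - sampling_frequency * nearest_multiple)

pitch = 0.004  # hypothetical 4 micrometre pixel pitch, expressed in mm
print("Nyquist: %.0f cycles/mm" % nyquist_frequency(pitch))
for f in (100.0, 150.0, 200.0):
    print("%.0f cycles/mm from the lens appears as %.0f cycles/mm"
          % (f, aliased_frequency(f, pitch)))
```

Detail that the lens passes above the Nyquist frequency is not lost cleanly; it reappears at a lower, false frequency, which is what produces moiré.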

Image Processing

Image processing algorithms, such as Bayer pattern demosaicing, sharpening filters, and gamma correction, all substantially affect the system MTF. In principle, their effect on the system MTF is equivalent for CSC and DSLR systems, as the differences in their image processing pipelines have little to do with the format of the camera and depend more on the proprietary algorithms used.

Perceived Sharpness

The sharpness of an image produced from a camera depends on the individual capabilities of its components (lens, sensor and image processing), but the most important contributor to the overall sharpness of an image is how the human visual system perceives the sharpness. In addition to the combination of the lens, sensor and image processing the sharpness ultimately depends on the viewing conditions, such as the print size, viewing distance, lighting and display/print characteristics.

The megapixel count has been marketed as a single number that determines how large an image from a camera can be printed with “photo-quality”. “Photo-quality” refers to the number of dots in the print per inch (DPI) and is typically assumed to be approximately 300. Using the megapixel count as the sole factor for determining the maximum print size is misleading: what really matters is how the camera works as a system (the system MTF) and the minimum viewing distance to the image.
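The megapixel-to-print-size arithmetic behind this marketing claim is simple to reproduce. The sketch below assumes one image pixel per printed dot at 300 DPI; the 4000 x 3000 pixel image is a hypothetical 12-megapixel example.

```python
def max_print_size_inches(width_px, height_px, dpi=300):
    """Largest print (width, height) in inches at the given dots per inch."""
    return width_px / dpi, height_px / dpi

def megapixels_needed(width_in, height_in, dpi=300):
    """Megapixels required to fill a print of the given size at the given DPI."""
    return (width_in * dpi) * (height_in * dpi) / 1e6

width_in, height_in = max_print_size_inches(4000, 3000)
print("12 MP at 300 DPI: %.1f x %.1f inches" % (width_in, height_in))
print("16 x 24 inch print at 300 DPI needs %.1f MP" % megapixels_needed(16, 24))
```

This calculation says nothing about whether the pixels actually carry detail at that scale, which is why the system MTF and viewing distance matter more than the pixel count alone.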

The human visual system (HVS) can be modeled as a filter, similar to the MTF of a lens. For the HVS, the question of whether fine detail can be resolved depends on the spatial scale of the detail (the spatial frequencies) as well as the distance to the image, meaning that the HVS resolves fine details based on their angular subtense. Correspondingly, the HVS can be modeled as a filter, known as the contrast sensitivity function or CSF, in the angular-frequency domain. Numerous experiments dating back to the 1970s have been performed to measure the CSF.
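Because the CSF is defined in angular frequency, a spatial frequency on a print has to be converted using the viewing distance. The sketch below shows that conversion from simple geometry; the function name and the example values are illustrative assumptions.

```python
import math

def cycles_per_degree(cycles_per_mm, viewing_distance_mm):
    """Convert a spatial frequency on the print to angular frequency at the eye."""
    # Length on the print subtended by one degree of visual angle.
    mm_per_degree = 2.0 * viewing_distance_mm * math.tan(math.radians(0.5))
    return cycles_per_mm * mm_per_degree

# The same 2 cycles/mm detail viewed from three distances.
for distance_mm in (250.0, 500.0, 1000.0):
    print("%.0f mm: %.1f cycles/degree"
          % (distance_mm, cycles_per_degree(2.0, distance_mm)))
```

Doubling the viewing distance doubles the angular frequency of the same printed detail, pushing it toward the region where the eye is less sensitive.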

The subjective quality factor (SQF) is an image quality factor that accounts for the perceived sharpness of an image printed at a given size and viewed at a given distance. The SQF is a measurement of how well matched the system MTF of a camera is to the CSF of the HVS. This allows for the ranking of cameras on the basis of how sharpness is perceived by the HVS at a given distance and print size.

In practice, the differences in perceptual sharpness between CSC and DSLRs have more to do with the optics and sensor taken as a system, rather than megapixel count owing to the already sufficiently large number of megapixels. This point is especially true for images viewed on computer screens or even large 16” by 24” prints. In terms of megapixel count alone there is little difference between CSC and DSLRs with respect to perceptual sharpness.

Noise

Noise is characterized by a random variation in the image density. The most fundamental kind of noise is Poisson noise, which arises due to the random quantum nature of the photon statistics. Noise is most significant in very low-light and short-exposure images although it is present in all images. The amount of noise in a given image is determined by many different factors such as exposure time, source luminance, f/#, lens vignetting, sensor quantum efficiency, pixel structure and pixel size. Amongst the different factors that impact noise the size of the pixel is the biggest differentiator between CSC and DSLR formats. As pixel sizes decrease the number of photons detected at each pixel decreases (the number of photons detected is proportional to the area of the pixel) and as a result the noise variations increase. Since CSC cameras generally have smaller pixels than DSLRs (due to their smaller sensor sizes) CSC cameras will have more noise, especially so in low-light photography.
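The dependence of noise on pixel size follows from Poisson statistics, where the standard deviation of the photon count equals the square root of its mean. The sketch below considers shot noise only and uses a hypothetical photon density; read noise, quantum efficiency and fill factor are ignored.

```python
import math

def shot_noise_snr(photons_per_um2, pixel_pitch_um):
    """Shot-noise-limited SNR of one pixel: mean / std = N / sqrt(N) = sqrt(N)."""
    photons = photons_per_um2 * pixel_pitch_um ** 2  # count scales with pixel area
    return math.sqrt(photons)

# Same exposure (hypothetical photons per square micrometre), different pixels.
for pitch_um in (2.0, 4.0, 6.0):
    print("%.0f um pixel: SNR = %.0f" % (pitch_um, shot_noise_snr(100.0, pitch_um)))
```

Halving the pixel pitch quarters the photon count and halves the per-pixel SNR under this model.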

Dynamic Range

The dynamic range of a sensor is the range of light levels a camera can measure within a certain noise-quality level. Dynamic range is typically quantified in powers of 2, such as EVs or f-stops. Dynamic range is an IQF that can be improved by taking more or different image data, including taking longer exposures with a tripod, lowering the camera’s ISO speed or merging multiple exposures into a high dynamic range image. Because the dynamic range depends on the noise statistics, sensors with smaller pixel sizes, such as those in CSCs, tend to have more noise and hence less dynamic range than the larger-pixel DSLRs.
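Expressing dynamic range in powers of 2 amounts to taking a base-2 logarithm of the ratio between the largest usable signal and the noise floor. The sketch below uses that simple ratio as an assumed working definition; the electron counts are hypothetical, and practical measurements apply a noise-quality criterion that this sketch omits.

```python
import math

def dynamic_range_stops(max_signal_electrons, noise_floor_electrons):
    """Dynamic range in stops (EV): log2 of max signal over noise floor."""
    return math.log2(max_signal_electrons / noise_floor_electrons)

# Hypothetical large and small pixels with the same noise floor.
print("Large pixel: %.1f stops" % dynamic_range_stops(40000.0, 5.0))
print("Small pixel: %.1f stops" % dynamic_range_stops(10000.0, 5.0))
```

Each halving of the maximum signal a pixel can hold costs one stop of dynamic range when the noise floor is unchanged.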

Tone Reproduction and Contrast

Closely related to dynamic range is tone reproduction. Tone reproduction is the relationship between illumination and pixel level. Typically, the tone reproduction curve is quantified by plotting log(pixel level/255) versus log(exposure). The slope of the tone response curve is the γ (gamma) or contrast of the camera. When γ is too high, the image will have too much contrast and there can be a loss of dynamic range in the highlights and shadows due to clipping. When γ is too small, the image can look washed out and dull. Tonal response curves are applied to an image to make the image perceptually more pleasing: a high γ in the mid-tones that rolls off in the highlights prevents burnout and makes the camera response more film-like. Generally, there is little to compare between DSLRs and CSCs with this IQF. That said, tone reproduction is one of the most important IQFs, since the human visual system can keenly evaluate the reproduction of tone.
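The slope described above can be estimated from two points on the tone reproduction curve. The sketch below assumes 8-bit pixel levels and a pure power-law response between the two points; the sample values are hypothetical.

```python
import math

def gamma_from_points(exposure_1, pixel_1, exposure_2, pixel_2, max_level=255.0):
    """Slope of log(pixel level / max_level) versus log(exposure)."""
    rise = math.log10(pixel_2 / max_level) - math.log10(pixel_1 / max_level)
    run = math.log10(exposure_2) - math.log10(exposure_1)
    return rise / run

# Hypothetical camera whose response is pixel = 255 * exposure ** 0.5.
low = (0.25, 255.0 * 0.25 ** 0.5)
high = (1.0, 255.0)
print("Estimated gamma: %.2f" % gamma_from_points(low[0], low[1], high[0], high[1]))
```

Real tone curves are not straight lines in log-log space, so in practice the slope is evaluated over a chosen mid-tone region rather than over the full range.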

Vignetting

Lens vignetting is colloquially used as a catch all for anything that reduces the illumination in the image radially about the image center. Vignetting can be caused by:

- the clipping of rays of light as they pass through an optic, which is the true definition of vignetting

- variations in the f/# with image coordinate

- poor coupling of light rays into the pixel structure

- the ubiquitous cos⁴(θ) rule that says that the illumination at an off-axis image point is equal to the illumination at the center of the image times cos⁴(θ), where θ is the angle between the two image points whose vertex is at the exit pupil (the image of the aperture stop).

There is little difference between CSCs and DSLRs with respect to the first three vignetting examples above, but the cos⁴(θ) law does have a greater impact on lenses with a shorter optical track length, like CSC lenses, than on longer track length DSLR lenses, because the exit pupil of a DSLR lens will in general be farther from the sensor. The result is that the cos⁴(θ) law implies that CSC lenses will in general reduce illumination in the corners of the sensor more readily than DSLR lenses.
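The effect of exit pupil distance on corner illumination can be estimated directly from the cos⁴(θ) term. In the sketch below, θ is computed from the image height and the exit pupil distance for a simple on-axis geometry; the distances are hypothetical values chosen for illustration, not measurements of any lens.

```python
import math

def cos4_relative_illumination(image_height_mm, exit_pupil_distance_mm):
    """Illumination at an off-axis point relative to the image center."""
    theta = math.atan2(image_height_mm, exit_pupil_distance_mm)
    return math.cos(theta) ** 4

corner_height_mm = 10.8  # roughly half the Micro Four Thirds sensor diagonal
for pupil_distance_mm in (20.0, 40.0, 80.0):
    falloff = cos4_relative_illumination(corner_height_mm, pupil_distance_mm)
    print("Exit pupil at %.0f mm: corner illumination %.0f%% of center"
          % (pupil_distance_mm, 100.0 * falloff))
```

For a fixed image height, moving the exit pupil closer to the sensor increases θ and darkens the corners.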

Color Accuracy

Color accuracy is an IQF that is important but not necessarily desired. Most people prefer images whose color is more saturated than the original object.

The color accuracy can be quantified in many different ways but they are all derived from the measurement of color shifts and white balance accuracies. The differences are a function of the color space used to quantify the errors. The acceptable threshold of the errors is normally dictated by the user’s ultimate use of the image for viewing or printing.

Conclusions

Today’s DSLRs have evolved into their current format by maintaining many of the characteristics of the SLR format while adapting them to digital imagery. The CSC format is more of a revolution than an evolution of the SLR format, casting away many of the optical and size constraints of the SLR. The differences between CSCs and DSLRs affect many different factors that ultimately combine to determine the overall image quality.

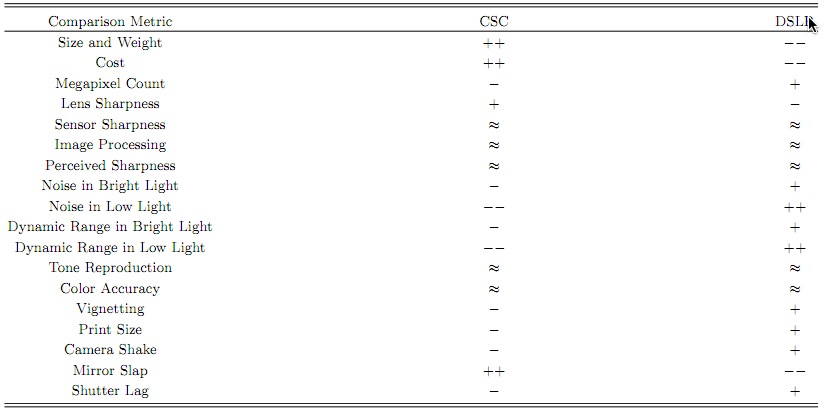

Comparison table between the CSC and DSLR formats.

The notation is that ++ or -- represents a clear advantage/disadvantage, + or - represents a minor advantage/disadvantage, and ≈ implies that the formats are roughly similar.

Low-light and bright-light conditions imply capturing images with ISO speeds above or below 800, respectively.

This table summarizes the general comparisons that can be made about the differences between CSC and DSLR formats. These comparisons are subjective in that they derive from interpretations of how the differences in the camera formats impact different image quality factors. These comparisons are not made on the basis of a data-driven comparison between specific camera models and lenses.

The only significant differences in the table result directly from the two primary format differences, namely the smaller sensor and the removal of the TTL system. In general, the reduced camera size of the CSC can lead to an increase in optical performance, and ultimately increase sharpness, both by taking better advantage of the SBP and by raising the MTF closer to the diffraction limit as a result of shortening the focal length. This increase in performance may even be traded off in other ways, such as looser fabrication tolerances or reduced optical design complexity. The reduced sensor size of CSCs in general increases the noise owing to the smaller pixels, which can be an issue when photographing in low-light situations.

August, 2011
