Do Sensors “Outresolve” Lenses?

by Rubén Osuna and Efraín García

We read everywhere that new high-resolution sensors put pressure on current lenses. Such comments appear copiously each time a new sensor with a higher pixel count is announced. It happened with the 22 million pixels of the Canon 1Ds Mark III, and it will happen again when Sony's 25 MP sensor makes its way into a new camera. Are comments of this kind accurate? There is no short answer, because the subject is complex. However, we will try to summarize several basic rules and results, closing a previous discussion at The Luminous Landscape.

Lens resolution basics

To begin with, a bit of terminological precision will be handy. The fineness of detail in a photograph is resolution. This resolved detail has a particular degree of visibility, which depends on contrast. Resolution and contrast together determine image clarity. Sharpness, on the other hand, refers to edge definition in the resolved detail, and it is determined by edge contrast. Resolving power is an objective measure of resolution (expressed in resolved line pairs or cycles per millimeter), and acutance is a measure of sharpness (calculated by tracing a gradient curve across an edge). Taken separately, neither resolving power nor acutance is a good measure of image quality[1].

The modulation transfer function (MTF) is a far more comprehensive objective measure of image quality, because it combines resolution and contrast. The MTF is a mathematical expression of the signal transmitted by a lens. These functions were first used in electrical engineering, and the basic terminology was later adopted in photography. A signal has two properties: frequency (spatial or temporal) and amplitude. Frequency is the number of repetitions of a signal per unit of length or time. Amplitude refers to the difference between the minimum and maximum levels of a signal. In photographic signals, (spatial) frequency is resolution and amplitude is contrast. The more line pairs (one black, one white) we fit into a unit of length, the higher the signal frequency, or resolution. We can think of contrast as the brightness difference between adjacent areas.
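As a minimal sketch of how a single MTF value can be computed, the Python below uses the common Michelson definition of modulation, (max - min) / (max + min), and takes the MTF at one spatial frequency as the ratio of output modulation to input modulation. The sine patterns and the reduced brightness swing of the "image" are invented illustration values, not measurements of any real lens.

```python
import math


def modulation(samples):
    """Michelson modulation (contrast) of a periodic signal: (max - min) / (max + min)."""
    hi, lo = max(samples), min(samples)
    return (hi - lo) / (hi + lo)


def sine_pattern(freq_lp_mm, mean, amplitude, length_mm=1.0, n=2000):
    """Brightness of a sinusoidal bar pattern sampled at n points along length_mm."""
    step = length_mm / n
    return [mean + amplitude * math.cos(2 * math.pi * freq_lp_mm * i * step)
            for i in range(n)]


# Hypothetical subject: 40 lp/mm, brightness swinging from pure black (0) to pure white (1).
subject = sine_pattern(40, mean=0.5, amplitude=0.5)
# Hypothetical image of it: same average brightness, but the swing shrinks to 0.2..0.8.
image = sine_pattern(40, mean=0.5, amplitude=0.3)

mtf_40 = modulation(image) / modulation(subject)
print(f"subject modulation {modulation(subject):.2f}")  # 1.00
print(f"image modulation   {modulation(image):.2f}")    # 0.60
print(f"MTF at 40 lp/mm    {mtf_40:.0%}")               # 60%
```

Repeating the calculation at many frequencies and plotting the ratios gives the kind of curve described in the next paragraphs.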

Lens resolution is limited by diffraction, which increases as you close the diaphragm, and by aberrations, which worsen with focal length and as the diaphragm is opened.

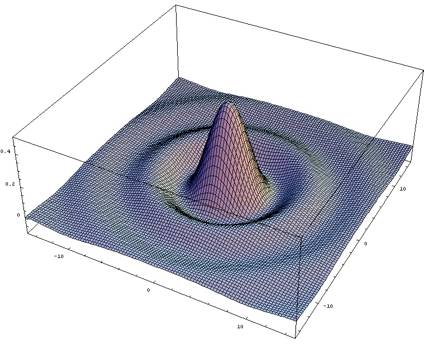

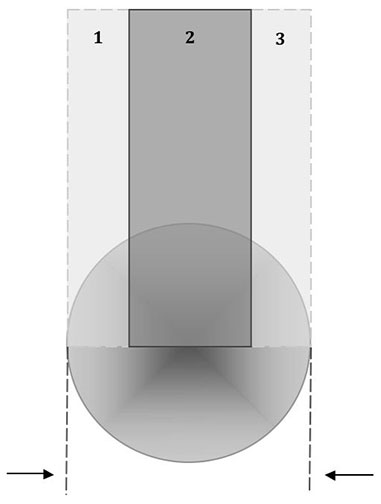

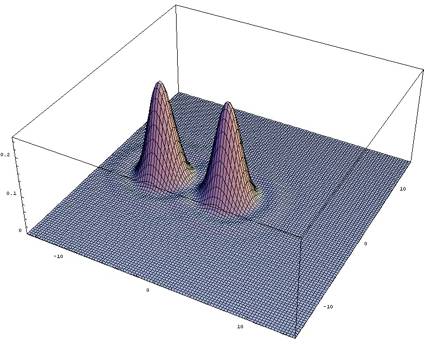

Light behaves somewhat like a flow, and closing the diaphragm blades produces a spreading effect similar to that of water squirting under great pressure out of a pipe through a narrow hole. This is diffraction; it degrades resolution and contrast, and it cannot be avoided. The light spots projected on the focal plane by the lens have a particular shape: a bright central spot (the Airy disk) surrounded by concentric rings that are alternately dark and bright (the Airy pattern). The Airy disk is brightest at the center, and the light intensity decreases toward its edges (see Figure 1). The wider the aperture, the smaller the Airy disks.

Figure 1. 3D representation of the Airy pattern, where the height represents the light intensity.
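To make the shape of the Airy pattern concrete, here is a small sketch that evaluates the standard Airy intensity formula, I(r) = (2·J1(x)/x)², with x = π·r/(λ·N) for a point at radius r on the focal plane, wavelength λ and f-number N. It assumes an aberration-free lens with a circular aperture; the Bessel function J1 is computed from its integral form so that only the Python standard library is needed, and the helper names are ours. The first dark ring falls at r ≈ 1.22·λ·N.

```python
import math


def bessel_j1(x, steps=200):
    """Bessel function J1(x) from its integral form:
    J1(x) = (1/pi) * integral from 0 to pi of cos(t - x*sin(t)) dt.
    The trapezoid rule converges very quickly here because the integrand is
    smooth, even and periodic."""
    h = math.pi / steps
    total = 0.5 * (math.cos(0.0) + math.cos(math.pi - x * math.sin(math.pi)))
    for k in range(1, steps):
        t = k * h
        total += math.cos(t - x * math.sin(t))
    return total * h / math.pi


def airy_intensity(r_um, wavelength_um, f_number):
    """Relative intensity of the Airy pattern at radius r (1.0 at the center)."""
    if r_um == 0:
        return 1.0
    x = math.pi * r_um / (wavelength_um * f_number)
    return (2.0 * bessel_j1(x) / x) ** 2


WAVELENGTH_UM = 0.555  # green-yellow light, as used later in the article
F_NUMBER = 8           # example aperture

first_dark_ring_um = 1.22 * WAVELENGTH_UM * F_NUMBER
for r in (0.0, 1.0, 2.0, 3.0, 4.0, 5.0, first_dark_ring_um, 7.0):
    print(f"r = {r:5.2f} um   relative intensity {airy_intensity(r, WAVELENGTH_UM, F_NUMBER):.3f}")
```

At f/8 the intensity falls to practically zero at about 5.4 microns from the center and then rises again slightly in the first bright ring. Halving the f-number halves every radius, which is why wider apertures give smaller Airy disks.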

Aberrations have a similar negative impact on resolution and contrast, and lens designers try to reduce them. Their success determines how much resolution and contrast is preserved when the diaphragm is opened, because several aberrations grow with the aperture (spherical aberration, coma, axial chromatic aberration, astigmatism, field curvature) and become very hard to control in high-speed lenses[2]. Each aberration distorts the shape of the Airy pattern in a characteristic way (see Natalie Gakopoulos’ simulations).

When we see a black human hair, we see it against a brighter area. The stronger the difference in brightness, the more clearly the hair is perceived. Lenses cannot keep all the detail at full contrast (as present in the subject). Transmitted contrast is the degree to which black lines remain black and white lines remain white. When contrast drops, pure black and white lines become grey, and the differences between lines vanish. We can measure contrast as a percentage. Any value below 100% implies a loss, and lenses can transfer only coarse detail at the maximum level of “fidelity”. The finer the detail, the larger the contrast losses due to aberrations and diffraction. Below some minimum level of contrast, fine detail is not discernible at all.

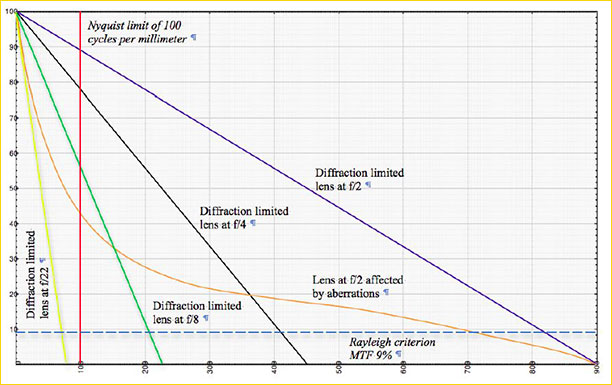

There are two widely used ways of representing modulation transfer functions, and both are informative. The first plots contrast on the vertical axis (as a percentage) against resolution on the horizontal axis (in line pairs per millimeter, lp/mm), for a particular point in the image and a particular wavelength of light. We can then trace a curve for each aperture of the lens and analyze how the shape of the curves changes (see Figure 2).

Figure 2. Graphic representation of the MTFs of two hypothetical lenses and a sensor of 100 lp/mm (5 microns). Wavelength of the light: 0.000555 mm.

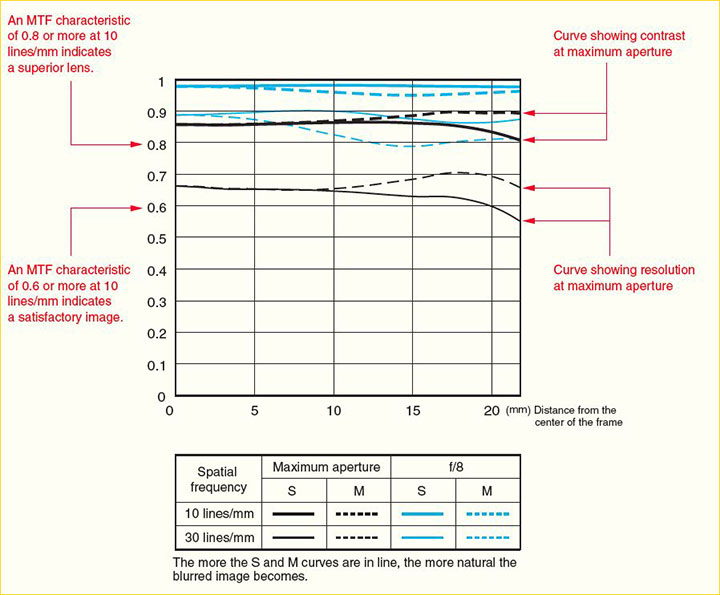

The second way of representing MTFs, commonly used by lens manufacturers, consists of plotting contrast on the vertical axis (as a percentage) against distance from the center of the frame (in millimeters) on the horizontal axis. In this case, we trace a curve for each of a set of selected resolution levels (usually 5, 10, 20, 30 and 40 lp/mm) at a given aperture. See Figure 3 for an example: it is the graphic representation of the MTF as presented by Canon. Canon selects the 10 lp/mm and 30 lp/mm resolutions and presents curves for full aperture and f/8. Other brands may print curves for different sets of resolutions and apertures, but the type of graph is the same in all cases.

Why are those resolution values selected? Carl Zeiss empirically studied how much detail is relevant for the subjective perception of quality in a photograph. They concluded that, in the 35mm format, detail resolved on the negative beyond 40 lp/mm at a minimum of 25% contrast has no significant effect on perceived image quality in small prints (A4 or even larger)[3]. This is consistent with visual “legibility” values, related to the resolution a photograph needs for a sufficiently accurate reproduction of letters and words. Values in the range of 6-8 lp/mm at the optimum viewing distance guarantee good sharpness perception on the print (Williams 1990, pp. 55-56). Moreover, we are more sensitive to intermediate levels of detail, ranging from 0.5 to 2 lp/mm, as Bob Atkins explains.

The level of contrast the system provides over the relevant range of detail is the key variable in determining the subjective perception of image quality[4]. On the other hand, the area under the MTF curves to the left of the vertical red line in Figure 2 (the resolution of the sensor) is key for determining the maximum potential image quality of the system.

Figure 3. Canon typical MTF graph representation for lenses

MTF graphs offer more information than you might imagine, for instance about bokeh, the way a lens renders the out-of-focus areas of an image. MTF graphs present curves for meridional, or tangential (dashed), and sagittal (solid) oriented detail. The closer these two curves are to each other, the more harmonious the bokeh of the lens. The MTF curves also inform us about color fringing caused by chromatic aberrations, that is, colors along the borders of high-contrast transitions[5]. Only the tangential curve points to chromatic aberrations. Stopping down doesn’t mitigate the problem, so the tangential curve doesn’t improve when we close the diaphragm blades. Therefore, if the sagittal and tangential curves separate when you stop down, this indicates the presence of chromatic aberrations.

_____________________________________________________________

Formats make comparisons tricky

To make relevant comparisons among formats, a common point of reference must be adopted. For a smaller format to resolve the same absolute detail as a bigger format (the same detail in an A3 print, for instance), it must resolve more detail per millimeter on the sensor (or negative). So lenses and sensors for smaller formats must have higher resolving power to approach the detail captured by larger formats, but this comes at the price of lower signal-to-noise ratios (which translate into noise, narrower dynamic range or lower tonal richness)[6].

The point here is that you cannot directly compare the MTF curves of a lens designed for the 35mm format with those of a lens designed for the APS-C or Four Thirds format. Even if you use a lens designed for 35mm on a cropped sensor, the relative performance of that lens is difficult to measure, as Erwin Puts has explained. The 40 lp/mm curves for the 35mm format are equivalent to 60 lp/mm curves in the APS-C format (x1.5 crop factor), to 80 lp/mm curves in the Four Thirds format (x2 crop factor) and to 30 lp/mm curves in digital medium format (cropped 645, that is, 36x48mm; x0.72 with respect to 35mm).

Olympus, for instance, presents 20 lp/mm and 60 lp/mm curves. These curves are directly comparable to typical 10 lp/mm and 30 lp/mm MTF curves for 35mm format, like those offered by Canon.
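The conversion between formats is a single multiplication by the crop factor. This sketch uses the crop factors given above and assumes that the comparison is made at the same final print size; the function name is ours, for illustration.

```python
# Crop factors relative to 35mm, taken from the paragraphs above.
CROP_FACTORS = {
    "35mm": 1.0,
    "APS-C": 1.5,
    "Four Thirds": 2.0,
    "36x48mm medium format": 0.72,
}


def equivalent_lp_mm(lp_mm_on_35mm, crop_factor):
    """Frequency on the sensor of another format that renders the same detail in the
    final print as `lp_mm_on_35mm` does on a 35mm sensor (same print size)."""
    return lp_mm_on_35mm * crop_factor


for name, crop in CROP_FACTORS.items():
    pairs = ", ".join(f"{f} -> {equivalent_lp_mm(f, crop):g}" for f in (10, 30, 40))
    print(f"{name:22s} {pairs} lp/mm")
```

For Four Thirds, 10 and 30 lp/mm map to 20 and 60 lp/mm, the pair Olympus publishes. The 0.72 factor gives 28.8 lp/mm for 40 lp/mm, which the text rounds to 30.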

Perceived quality and the circle of confusion argument

Those resolution values aren’t the limit. Lenses can resolve finer detail with good levels of contrast. See, for instance, Erwin Puts’ figures for several top-quality 50mm lenses at f/5.6: his table shows resolutions of 160 lp/mm at 30-35% contrast. However, it is argued that the visual acuity of the human eye limits the relevant resolving power of a photographic system. The final output is the print, and the naked eye can see detail only up to a point. This limit determines how much detail the lens and the sensor or film must resolve. We will now explain why this line of reasoning isn’t correct for evaluating or comparing current digital equipment.

The largest spot on a print that the human eye still perceives as a point, rather than as a disk, corresponds to a spot of a particular size on the negative. This is called the circle of confusion (CoC). Its size implies the maximum resolution we can profit from in the photographic system. The above-mentioned 40 lp/mm figure is closely related to the circle of confusion. The concept is also relevant for depth of field formulas, for the same reasons: unsharp areas have spot sizes bigger than the circle of confusion. For depth of field calculations, tables and marks, a 30 micron spot size was adopted for the 35mm format a long time ago. This important concept rests on many assumptions regarding print size, format size, resolving power of the lens and film/sensor, and visual acuity. Many of these underlying assumptions, however, are wrong or outdated.

As Zeiss stated in Camera Lens News No. 1 (1997), regarding the circle of confusion assumptions for depth of field scales:

All the camera lens manufacturers in the world including Carl Zeiss have to adhere to the same principle and the international standard that is based upon it, when producing their depth of field scales and tables.

The normally satisfactory value (0.03mm for the 35mm format, 30 lp/mm) was standardized with the film image quality in mind at the time the standard was defined, which was long before World War II.

Meanwhile some decades have passed, today's color films easily resolve 120 lp/mm and more, with Kodak Ektar 25 and Royal Gold 25 leading the field at 200. Four-color printing processes have also improved vastly and so have our expectations about sharpness.

This is still absolutely okay as far as the large majority of photo amateurs is concerned, that take their photos without tripods and have them printed no larger than 4×6.

See how outdated those assumed figures are today, and with them the typical depth of field scales. Visual acuity was also severely underestimated.

The separation between cones in the fovea is 0.0015 millimeters, which limits the maximum possible visual resolution to 20 arc-seconds. In practice, 30 arc-seconds is known to be barely discernible. Taking that figure as a bound, 60 arc-seconds is a good practical value for the average absolute visual acuity limit.

At an optimum viewing distance of 25 cm, 60 arc-seconds corresponds to a black spot on a bright background 0.07 millimeters in diameter (bright spots on a dark background may be even smaller). Many take that number and make wrong calculations. A spot 0.07 mm in diameter doesn’t correspond to a line of that width, but to a line pair of that width (see Figure 4), because light spots have brightness differences from center to edge. This implies 14 lp/mm instead of the usual 7 lp/mm figure. But even that number is too conservative in many cases…

Figure 4: An isolated point, a line pair

In effect, a very small isolated spot cannot excite a sufficiently large number of retinal cones, but a line can. We perceive more detail when it is formed by lines instead of spots, which means we can see a line thinner than the diameter of the smallest perceivable spot. Contrast is another factor to consider: the resolution of the eye also depends on the contrast between bright and dark areas. Moreover, it is higher when we look at high-contrast bright lines (or spots) on a dark background, and lower when we look at dark lines (or spots) on a bright background.

We can also see a thinner line if it is isolated from other adjacent lines.

We can see an isolated black line on a bright background if the line is at least 0.001 millimeters (1 micron) thick, at an optimum (for resolution) viewing distance of 25 centimeters. This corresponds to 0.8 arc-seconds. An isolated bright line on a dark background can be seen regardless of its width, depending only on the brightness of the line.

When we look at groups of lines, visual resolution drops. The eye can separate two black lines on a bright background if the distance between them (center to center) is at least 0.05 millimeters (40 arc-seconds at a viewing distance of 25 cm). This translates to 20 line pairs per millimeter. Even 25 line pairs per millimeter have some significant impact on perceived image quality. But consider this: for two bright lines on a dark background, the resolution goes to 1 arc-second! These figures are much higher than the 7 lp/mm usually presented as the visual limit.
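The conversions between viewing angle and size on the print used in this section are simple trigonometry. This sketch assumes the 25 cm viewing distance from the text and recovers, approximately, the 0.07 mm spot, the 7 versus 14 lp/mm readings of that spot, the 0.8 arc-second line and the 40 arc-second (20 lp/mm) spacing. The function names are ours.

```python
import math

VIEWING_DISTANCE_MM = 250.0  # the 25 cm optimum viewing distance used in the text


def arcsec_to_mm(arcsec, distance_mm=VIEWING_DISTANCE_MM):
    """Size on the print subtended by an angle given in arc-seconds."""
    return distance_mm * math.tan(math.radians(arcsec / 3600.0))


def mm_to_arcsec(size_mm, distance_mm=VIEWING_DISTANCE_MM):
    """Angle, in arc-seconds, subtended by a detail of the given size."""
    return math.degrees(math.atan(size_mm / distance_mm)) * 3600.0


spot = arcsec_to_mm(60)
print(f"60 arc-sec spot: {spot:.3f} mm")
print(f"  read as one line:      {1 / (2 * spot):.1f} lp/mm")
print(f"  read as one line pair: {1 / spot:.1f} lp/mm")
print(f"1 micron line: {mm_to_arcsec(0.001):.1f} arc-sec")
print(f"0.05 mm line spacing: {mm_to_arcsec(0.05):.0f} arc-sec, {1 / 0.05:.0f} lp/mm")
```

Reading the 0.07 mm spot as one line gives the customary 7 lp/mm, while reading it as one line pair gives about 14 lp/mm, which is the distinction drawn above.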

So the usual visual acuity figures aren’t accurate in all cases. But this isn’t the end of the story, because the assumed reference print size is outdated as well. In effect, 8×10 prints (smaller than A4) can no longer define what is demanded of a photographic system. In the digital age, the typical print has grown to A3 or even larger. Moreover, image quality and resolution comparisons are usually based on visual inspection on a computer screen at 100% magnification. When we view a 12 MP photograph at 100% on a 96 ppi computer screen, we are seeing it at a size equivalent to a paper print larger than 1 meter x 70 centimeters (40 x 28 inches)!
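The print-size equivalence can be checked with a few lines of arithmetic. This sketch assumes a 3:2 aspect ratio (typical of DSLRs, but an assumption here) and treats one screen pixel at 96 ppi as one dot of the equivalent print; the function name is hypothetical.

```python
import math


def equivalent_print_size(megapixels, aspect=3 / 2, ppi=96.0):
    """Width and height in inches of an image shown at 100% on a screen with the given
    pixel density, if one screen pixel is treated as one dot of a print."""
    height_px = math.sqrt(megapixels * 1e6 / aspect)
    width_px = height_px * aspect
    return width_px / ppi, height_px / ppi


w_in, h_in = equivalent_print_size(12)
print(f"{w_in:.0f} x {h_in:.0f} inches ({w_in * 2.54:.0f} x {h_in * 2.54:.0f} cm)")
```

For a 12 MP, 3:2 image this comes out at roughly 44 x 29 inches (about 112 x 75 cm), comfortably above the 40 x 28 inches mentioned in the text.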

Many people have experienced an apparent worsening of the focus precision of lenses when used with digital equipment (focus shift, back focus, depth of field), and all those outdated conventions and assumptions might be one cause of it.

For all the above reasons, the resolving power of a digital system must be evaluated at its limit. There are practical considerations for which relative concepts like the circle of confusion or the subjective perception of quality are relevant, but this greatly depends on each particular case. For any meaningful comparison or evaluation, it is the performance at the limit that counts. Technical progress usually translates into marginal improvements, and these small steps are the basis of competition and of decisions on equipment investment.

Diffraction limits the resolution of the system

Accepting the resolution limit of the system as the point of reference, where is that limit? What determines it: the lens or the sensor? Do sensors “outresolve” lenses? Before we can answer that question, we should clarify several concepts related to sensor and lens resolving power and how they interact. The original signal that enters the lens isn’t band-limited (except for test charts), but the lens limits the maximum frequency transferred to the detector, whether sensor or film. Digital capture differs from film capture in several important ways, mostly due to the regular arrangement of pixels compared to the random and irregular grain structure of film emulsions. The main consequence is that digital sensors are bandwidth-limited detectors, whereas film is “grain-limited”, so to speak[7]. Digital capture devices abruptly stop recording detail at the so-called Nyquist limit, as you can see in Figure 2.

This property has consequences, the most important one being related to the possibility of correctly “reconstructing” the original signal as transferred by the lens. The Nyquist-Shannon theorem says that to reproduce accurately a continuous signal of a particular frequency, the sampling frequency must be at least twice that frequency (see this simulator). The units of the theorem must be translated to the particular case of digital imaging: at least 2 samples per cycle means two pixels per line pair[8].

We can then define the Nyquist limit as the maximum signal frequency (in cycles or line pairs per millimeter) that can be read or reproduced accurately at a particular sampling frequency. In the best case, you get one cycle (or line pair) from two pixels, and that is the limit: the Nyquist limit. Thus, for a sensor with a sampling frequency of 160 pixels/mm, the Nyquist limit is 80 cycles/mm, that is, 80 line pairs per millimeter (see, for instance, this essay).

For normal photographic purposes, 3 pixels per line pair (x1.5 the Nyquist rate) or even 2 pixels per line pair (x1 the Nyquist rate) do the job. Some tests made by scanning test charts show that it could in fact be necessary to have many more than two pixels per line pair (see, for instance, the analysis by R. N. Clark), but the original Nyquist theorem doesn’t refer to this case, and usual photographic subjects aren’t pairs of high-contrast black and white lines on a plane either[9]. This means that in practice the effective resolution of a sensor may be a mere 70% of its maximum possible value, the Nyquist limit, depending on the subject and other variables. Bayer arrays make things more complex, as we will see.
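The Nyquist arithmetic in the last two paragraphs is easy to check. The sketch below converts between pixel pitch, sampling frequency and Nyquist limit, and also applies the 3-pixels-per-line-pair rule of thumb mentioned above; the function names are ours, for illustration only.

```python
def sampling_from_pitch(pixel_pitch_um):
    """Pixels per millimeter for a given pixel pitch in microns."""
    return 1000.0 / pixel_pitch_um


def nyquist_lp_mm(sampling_px_per_mm):
    """Nyquist limit: two samples (pixels) per cycle (line pair)."""
    return sampling_px_per_mm / 2.0


def practical_lp_mm(sampling_px_per_mm, pixels_per_lp=3.0):
    """Resolution if, in practice, more than two pixels are needed per line pair."""
    return sampling_px_per_mm / pixels_per_lp


print(nyquist_lp_mm(160))                  # 80.0 lp/mm, the example in the text
five_um = sampling_from_pitch(5.0)         # 200 pixels/mm
print(nyquist_lp_mm(five_um))              # 100.0 lp/mm, the sensor of Figure 2
print(round(practical_lp_mm(five_um), 1))  # 66.7 lp/mm at 3 pixels per line pair
```

At 3 pixels per line pair the usable resolution is two thirds of the Nyquist limit, in the same range as the roughly 70% figure given above.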

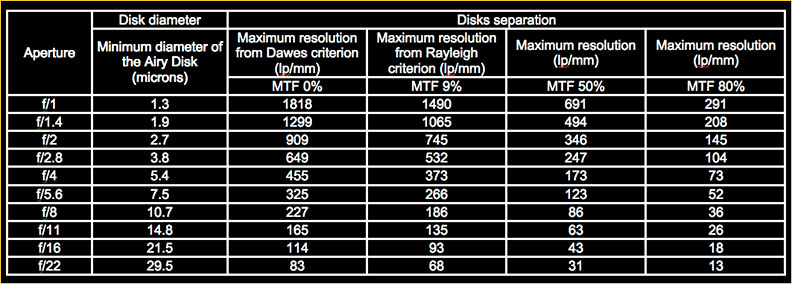

Table 1 reports the maximum resolution of a diffraction-limited (aberration-free) lens at different apertures and for different levels of contrast (Norman Koren explains how those numbers are obtained). Note in Table 1 how diffraction increases the diameter of the Airy disks as the lens is stopped down. In real photographic lenses, resolution approaches the values in Table 1 only in first-class designs at medium apertures, when aberrations are reduced by stopping down. The sweet spot of any lens is at medium apertures, because aberrations are reduced and diffraction effects aren’t strong yet.

Table 1. Minimum Airy disk diameter and corresponding maximum resolution depending on disk separation, for a diffraction-limited lens and green-yellow light (0.000555 mm wavelength)

The resolution values depend on the diameter and separation of the Airy disks. The size of the disk depends on the wavelength of the light and the aperture. We have selected an intermediate wavelength, corresponding to green-yellow, to which the eye is most sensitive. Then, for a particular wavelength and aperture, the distance between two adjacent Airy disks determines the resolution and contrast level.

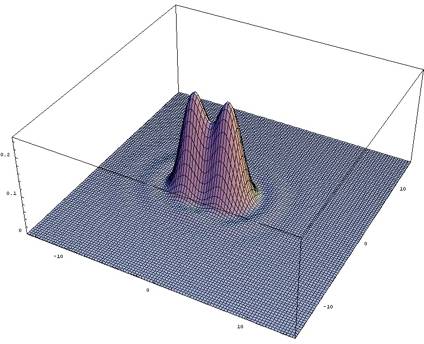

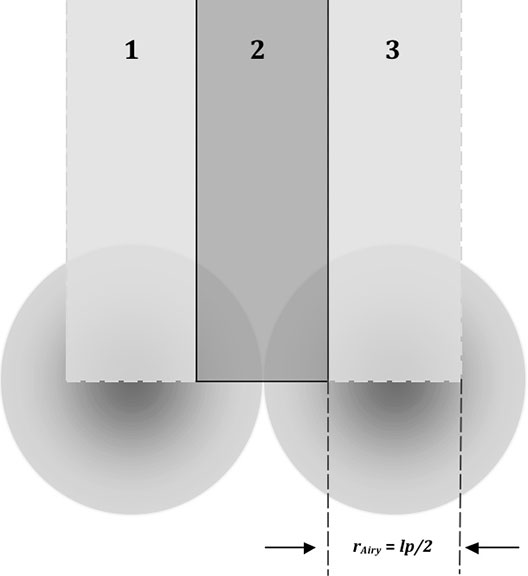

The Rayleigh criterion implies 9% contrast, fairly low but enough for the human eye to separate two partially overlapping disks (bright stars on a dark background seen through a telescope). The first dark ring of one Airy pattern (first minimum) must fall just under the center of the other disk (central maximum), so the disk separation is one Airy disk radius. The overlapping zone receives decreasing signal from both disks, and the resulting contrast (the height difference between the peaks at the centers of the Airy disks and the lowest point between them) is fairly low. A 3D representation of the Airy disks can help in visualizing it (Figure 5).


Figure 5. 3D representation of two partially overlapped Airy disks in the Rayleigh case.

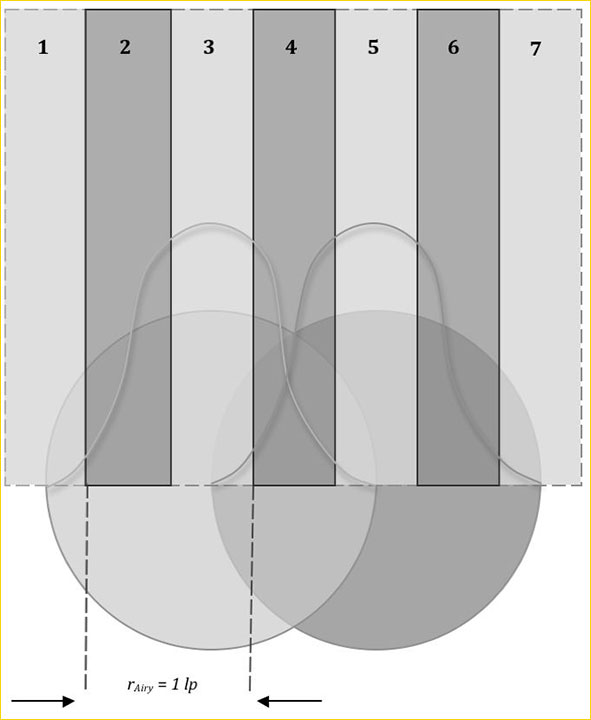

The following 2D graph represents two Airy disks with this Rayleigh separation, and the corresponding resolution in line pairs (Figure 6). See how the peaks (centers) and valleys (sides) of the disks determine a line pair. Remember the correspondence is a line pair per Airy disk radius.

Figure 6: Rayleigh case. Maximum resolution of 1 line pair per disk radius, and a low contrast level (MTF 9%).

The Rayleigh criterion – based on human visual acuity – isn’t adequate for estimating the resolving power of a lens that projects images on a sensor. The sensor needs more contrast and separation between Airy disks than the human eye. Foveal cones aren’t like pixels.

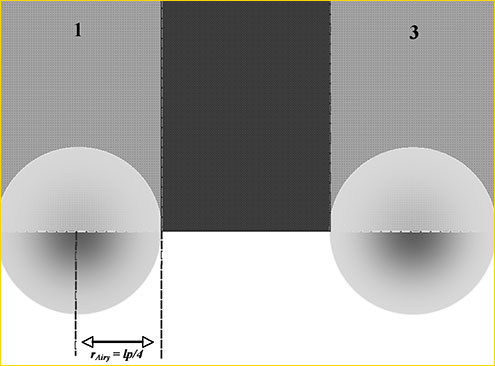

The following graph (Figure 7) corresponds to the ~50% contrast case. The Airy disks no longer overlap (the Airy patterns still do); resolution drops, but contrast increases compared to the Rayleigh case.

Figure 7. 3D representation of two adjacent Airy disks in the MTF ~50% case.

The two-dimensional representation helps in visualizing the relation between resolution, contrast and disk separation. Figure 8 provides such a representation for this new case. You can define fewer line pairs, but at higher contrast. The Airy disks don’t overlap, although the concentric rings that surround them do. Now you can resolve a maximum of one line pair per Airy disk diameter:

Figure 8: MTF ~50% case. The gap between the Airy disks is zero. You get 1 line pair per Airy disk diameter.

The ~80% case is presented in Figure 9. You can resolve ½ line pair per Airy disk diameter with a separation of one Airy disk diameter (there is some overlap of the Airy patterns in area 2, which detects the “black” line, so the resulting contrast isn’t maximal).

Figure 9: MTF ~80% case. You get ½ line pair per Airy disk diameter, but at a considerably higher contrast level.
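The contrast figures for the three cases can be cross-checked against the textbook MTF of an aberration-free lens with a circular aperture, MTF(s) = (2/π)[arccos(s) - s·√(1 - s²)], where s is the spatial frequency divided by the cutoff frequency 1/(λ·N). This is a sketch that assumes incoherent light of a single wavelength (0.555 microns); it is the standard formula, not necessarily the exact method used to build Table 1.

```python
import math

WAVELENGTH_MM = 0.000555  # green-yellow light, as in Table 1


def diffraction_mtf(freq_lp_mm, f_number, wavelength_mm=WAVELENGTH_MM):
    """MTF of an aberration-free lens with a circular aperture in incoherent light."""
    cutoff = 1.0 / (wavelength_mm * f_number)  # frequency at which contrast reaches zero
    s = freq_lp_mm / cutoff
    if s >= 1.0:
        return 0.0
    return (2.0 / math.pi) * (math.acos(s) - s * math.sqrt(1.0 - s * s))


def airy_diameter_mm(f_number, wavelength_mm=WAVELENGTH_MM):
    """Airy disk diameter to the first dark ring: 2.44 * wavelength * N."""
    return 2.44 * wavelength_mm * f_number


# Line pairs per Airy disk diameter in the three cases described above.
CASES = [("Rayleigh", 2.0), ("~50%", 1.0), ("~80%", 0.5)]

f_number = 8
d = airy_diameter_mm(f_number)
for label, lp_per_diameter in CASES:
    freq = lp_per_diameter / d
    print(f"f/{f_number} {label:9s} {freq:6.1f} lp/mm  MTF {diffraction_mtf(freq, f_number):.0%}")
```

It reports about 9% for the Rayleigh spacing and about 49% for one line pair per diameter, in line with the text; the half-line-pair case comes out near 74%, so the "~80%" label is best read as approximate. Because s depends only on the ratio of frequency to cutoff, these contrast values are the same at every aperture; only the frequencies in lp/mm change.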

The size and separation of the Airy disks impose a particular sampling interval, that is, a pixel spacing or pixel pitch. When the pixels are too big, some detail is lost and the system is limited by the sensor. When the pixels are too small, the system doesn’t resolve more detail, because it is diffraction-limited. There seems to be a minimum contrast threshold, and therefore a minimum disk separation, which translates into a maximum resolvable signal frequency and a minimum useful pixel pitch.

You need a pixel whose diagonal is at least as large as the diameter of the Airy disk in order to detect the spot, its position and its brightness. Therefore, in theory, 1.4 times the pixel size (the length of the diagonal of a square pixel) must equal the diameter of the Airy disk. This would make the pixel diagonal the diameter of the sensor’s circle of confusion.

However, an Airy disk can define a line pair (see Figure 4), and you need two pixels to extract this linear information from the spots and to avoid spatial aliasing. So the general rule for optimal sampling is 2 pixels per Airy disk diameter in monochrome sensors, which matches the Nyquist rate of 2 pixels per line pair. In practice, higher sampling frequencies don’t increase the resolved detail[10].

We now have all the data necessary for the calculation of the optimal pixel pitch, based on the minimum Airy disk diameter for a diffraction-limited lens.

Table 2. Minimum Airy Disk diameter and optimal sampling frequency/pixel size for different wavelengths of the light and a diffraction limited lens.

(1) Airy disk diameter in microns.

(2) Sampling frequency (in pixels per millimetre) considering 2 pixels per Airy disk diameter. Optimal for monochrome sensors and Bayer sensors with anti-alias filters.

(3) Sampling frequency (in pixels per millimetre) considering 4 pixels per Airy disk diameter. Optimal for Bayer sensors.

(4) Pixel pitch for the case (2), in microns

(5) Pixel pitch for the case (3), in microns
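The column definitions in notes (1) to (5) can be turned into a small calculation. This sketch assumes the usual 2.44·λ·N Airy diameter and shows only green-yellow light by default (other wavelengths can be passed in); it follows the notes' definitions but is not a copy of Table 2, so small rounding differences are possible.

```python
WAVELENGTH_UM = 0.555  # green-yellow light; other wavelengths can be passed in


def airy_diameter_um(f_number, wavelength_um=WAVELENGTH_UM):
    """Column (1): Airy disk diameter to the first dark ring, 2.44 * wavelength * N."""
    return 2.44 * wavelength_um * f_number


def optimal_sampling(f_number, pixels_per_diameter, wavelength_um=WAVELENGTH_UM):
    """Return (sampling frequency in pixels/mm, pixel pitch in microns) for a given
    number of pixels across one Airy disk diameter."""
    pitch_um = airy_diameter_um(f_number, wavelength_um) / pixels_per_diameter
    return 1000.0 / pitch_um, pitch_um


print("f-number  (1) um  (2) px/mm  (3) px/mm  (4) um  (5) um")
for n in (5.6, 8, 11, 16, 22):
    freq2, pitch2 = optimal_sampling(n, 2)  # note (2): monochrome, or Bayer with AA filter
    freq4, pitch4 = optimal_sampling(n, 4)  # note (3): Bayer sensors
    print(f"f/{n:<7} {airy_diameter_um(n):6.2f}  {freq2:9.0f}  {freq4:9.0f}  "
          f"{pitch2:6.2f}  {pitch4:6.2f}")
```

At f/8, for example, the Airy disk is about 10.8 microns across, which points to a pitch of about 5.4 microns with 2 pixels per diameter, or about 2.7 microns with 4.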

At this point you know that diffraction-limited lenses aren’t the normal case. Only a few top-class lenses approach the resolutions presented in Table 1, and even then only when stopped down to medium apertures. Consider f/5.6 as a reference, although it is really difficult to find a lens that is diffraction-limited at that aperture[11]. Diffraction-limited resolutions for f/8 or f/11 are more realistic for mass-produced lenses. The wavelength of the light is important as well: green is representative of daylight and corresponds to the maximum sensitivity of the eye. Typical DSLR sensors have pixels in the range from 5 to 6.4 microns. For instance, the Pentax K20D has 15.1 million pixels and a pixel pitch of 4.9 microns. The Olympus cameras based on 10 MP sensors have pixels of 4.7 microns. The Canon 450D has 5.1 micron pixels, while the Canon 1Ds Mark III has 6.4 micron pixels. The Nikon D300 has pixels of 5.3 microns, and the Nikon D3 of 8.5 microns. Sony’s new 25 MP 35mm-format sensor has 5.9 micron pixels, and the Alpha 350 has 14.9 million 5 micron pixels. Remember that you won’t get more resolution once the diameter of the Airy disk reaches 1.4 to 2 times the pixel pitch.
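The rule at the end of the paragraph can be turned around to give, for each pixel pitch listed, the aperture range over which the Airy disk grows to 1.4 and then 2 pixel pitches. This sketch assumes the 0.555 micron wavelength and an aberration-free lens, so the f-numbers are idealized; the function name is ours.

```python
WAVELENGTH_UM = 0.555  # green-yellow light

# Pixel pitches quoted in the paragraph above, in microns.
CAMERA_PITCH_UM = {
    "Olympus 10 MP (Four Thirds)": 4.7,
    "Pentax K20D": 4.9,
    "Sony Alpha 350": 5.0,
    "Canon 450D": 5.1,
    "Nikon D300": 5.3,
    "Sony 25 MP (35mm)": 5.9,
    "Canon 1Ds Mark III": 6.4,
    "Nikon D3": 8.5,
}


def f_number_where_airy_spans(pitch_um, pixels, wavelength_um=WAVELENGTH_UM):
    """f-number at which the Airy disk diameter (2.44 * wavelength * N)
    equals `pixels` times the pixel pitch."""
    return pixels * pitch_um / (2.44 * wavelength_um)


for camera, pitch in CAMERA_PITCH_UM.items():
    start = f_number_where_airy_spans(pitch, 1.4)
    end = f_number_where_airy_spans(pitch, 2.0)
    print(f"{camera:28s} {pitch:.1f} um  diffraction takes over between "
          f"f/{start:.1f} and f/{end:.1f}")
```

For 5 micron pixels the range is roughly f/5.2 to f/7.4, which is why f/8 already counts as diffraction-limited for such sensors; the 8.5 micron pixels of the Nikon D3 hold out to about f/12.5.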

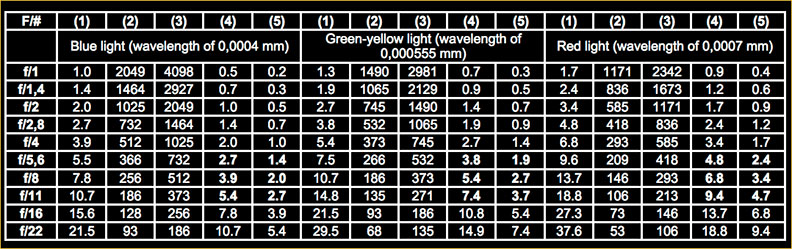

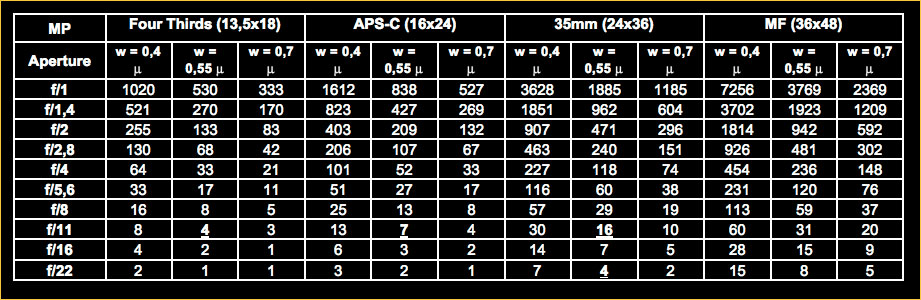

Table 3 shows how many pixels of the optimal size given in Table 2 fit in different formats. We have made calculations for different wavelengths, apertures and format sizes.

Table 3. Number of pixels of optimal size for different apertures of a diffraction-limited lens, wavelengths of the light and formats, considering 2 pixels per Airy disk diameter

You have all the data at hand, but take green-yellow light and f/8-f/11 apertures as a reference; this represents a realistic, not too demanding case. Consider a 35mm system with a lens at f/11. At best, the maximum resolution you will get is equivalent to 16 MP, even if your camera has 22 or 25 MP. For an APS-C based system the limit drops to 7 MP, and to 4 MP for the Four Thirds format. Stopping down to f/22, the effective resolution limit of the 35mm system drops to 4 MP!

Look again at Figure 2: the lens limits the resolution of the system with 5 micron pixels at f/22, but the same holds at f/16, f/11 or even f/8. That pixel pitch leads to a 10 MP Four Thirds sensor, a 15 MP APS-C sensor, a 35 MP 35mm-format sensor and a 70 MP sensor of 36x48mm. Now compare those numbers with the values presented in Table 3. Higher sensor resolutions make sense only for highly corrected lenses (with better performance at f/5.6 than at f/8). For instance, you can put 60 million pixels into a 35mm sensor, but only a diffraction-limited lens at f/5.6 would take advantage of it. The price to pay is huge files and comparatively low signal-to-noise ratios (which translate into noise, narrower dynamic range, poorer tonal variability… see, for instance, the Olympus E-3 reviews at dpreview.com and at The Luminous Landscape). The only alternative route to more detail is more capture surface, that is, a larger format, but aberrations are harder to control for larger image circles (see the “Y” variable in this table).
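The pixel counts in the last two paragraphs follow from dividing each sensor side by the pixel pitch. This sketch assumes typical sensor dimensions for each class (real sensors differ slightly), green-yellow light and 2 pixels per Airy disk diameter; the function names are ours.

```python
# Sensor dimensions in millimeters, assumed here as typical values for each class.
FORMATS_MM = {
    "Four Thirds": (17.3, 13.0),
    "APS-C": (23.6, 15.7),
    "35mm": (36.0, 24.0),
    "36x48mm": (48.0, 36.0),
}
WAVELENGTH_UM = 0.555  # green-yellow light, as in the tables


def megapixels(width_mm, height_mm, pitch_um):
    """How many square pixels of the given pitch fit on the sensor, in millions."""
    pitch_mm = pitch_um / 1000.0
    return (width_mm / pitch_mm) * (height_mm / pitch_mm) / 1e6


def matched_pitch_um(f_number, wavelength_um=WAVELENGTH_UM, pixels_per_diameter=2):
    """Pitch giving `pixels_per_diameter` pixels across the Airy disk (2.44 * wavelength * N)."""
    return 2.44 * wavelength_um * f_number / pixels_per_diameter


# Table 3 style: useful pixel count per format for a diffraction-limited lens.
for n in (5.6, 8, 11, 22):
    row = "  ".join(f"{name} {megapixels(w, h, matched_pitch_um(n)):5.1f}"
                    for name, (w, h) in FORMATS_MM.items())
    print(f"f/{n:<4} {row}  MP")

# The fixed 5 micron pitch of Figure 2, laid out on each format.
for name, (w, h) in FORMATS_MM.items():
    print(f"5 um pixels on {name}: {megapixels(w, h, 5.0):.1f} MP")
```

At f/11 on 35mm this gives roughly 15.6 MP, and about 3.9 MP at f/22, in line with the 16 MP and 4 MP quoted above; at f/5.6 it gives about 60 MP. Because the sensor dimensions are assumptions, individual results can differ slightly from the rounded figures in the text.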

Besides higher resolution, the current market trend is to also provide good noise handling, broader dynamic range, wider tonal variability, etc. In this context, increasing the number of pixels is becoming much harder for small-format sensors, and pointless from a resolution point of view.

Conclusions

So, do sensors outresolve lenses? It depends on the lens you use, the properties of the light, the aperture and the format. Small-format sensors may have surpassed the limit, that is, in most cases they are lens-limited in terms of resolution. It is easier, though, to correct aberrations for a smaller image circle, so diffraction-limited resolution can be approached at lower f-numbers. The signal-to-noise ratio, however, imposes an inflexible limit on the effective resolution of the whole system, mostly due to photon shot noise.

Sensors for larger formats are approaching the diffraction limit of real lenses, and it is more difficult to get high levels of aberration suppression for them. The point is that you cannot fully exploit the resolution potential of high-resolution sensors with regular mass-produced lenses, particularly for larger formats.

You cannot compare the limits of two different photographic systems looking at a print because the variables that determine the subjective perception come into play. Different systems can provide comparable results on paper under certain conditions (the circle of confusion reasoning explains how that is possible), but the limit of a system must be evaluated considering the pixel as the minimum circle of confusion.

Acknowledgments: I would like to thank Peter Burns, Norman Koren, Roger N. Clark, Brian A. Wandell and Nathan Myhrvold for their helpful comments and suggestions.

Rubén Osuna is a University Professor at the UNED, Madrid. Efraín García is a professional fashion and advertising photographer.

June – 2008

[1] See Williams, J. 1990. “Image Clarity: High-Resolution Photography”, Focal Press. It is a fantastic reference on image quality in photography.

[2] There are many kinds of aberrations. The third-order or Seidel aberrations are seven: the geometric or monochromatic ones (spherical aberration, coma, field curvature, astigmatism and distortion, of pincushion and barrel type) and the chromatic ones (of axial and lateral type). There are also higher-order aberrations: the 9 fifth-order or Schwarzschild aberrations, the 14 seventh-order aberrations, and so on. The most important aberrations for photography are those of the third and fifth orders. Fifth-order aberrations become more serious as we increase the angle of view and the lens speed. These aberrations affect image quality independently of the degree of correction of the Seidel aberrations, which forces a joint treatment of both types. We recommend Paul van Walree’s pages on optics for a more detailed explanation of these topics.

[3] See, for instance, Kämmerer, J. 1979. When is it advisable to improve the quality of camera lenses? Optics & Photography Symposium, Les Baux, 1979. See also Norman Koren’s summary. A key reference on image quality evaluation is Schade, O.H. 1975. Image Quality: A Comparison of Photographic and Television Systems. RCA Laboratories, Princeton. Reprinted in Society of Motion Picture and Television Engineers Journal, 96: 567, June 1987.

[4] See Heynacher, E., Köber, F. 1969. Resolving Power and Contrast, Zeiss Information, 51. For instance, Leica’s design rule for lenses is to provide 50 lp/mm at 50% contrast or more (see Erwin Puts for this).

[5] It points to the presence of a problem, but it is not a sufficient or necessary indicator of color fringing problems in digital images. See Norman Koren’s analysis for a detailed explanation.

[6] Higher-resolution lenses are easier to achieve for smaller formats precisely because several aberrations grow with the size of the format.

[7] Another consequence is related to visible “noise”. The finer the film grain, the lower the level of visible “noise” in the captured image. Conversely, the smaller the sensor pixel, the more prominent the “noise”. For an analysis of this digital trade-off between pixel size and signal-to-noise ratio, see Chen, T., Catrysse, P., El Gamal, A. and Wandell, B. 2000. “How Small Should Pixel Size Be?” Proc. SPIE Vol. 3965, pp. 451–459. Human vision is limited by the distribution and spacing of cones in the fovea, as we have seen. Another great reference on noise in digital cameras is the paper by Emil Martinec published online, “Noise, Dynamic Range and Bit Depth in Digital SLRs”.
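To make the pixel-size trade-off concrete, the sketch below assumes the simplest case: photon shot noise is the only noise source, so a pixel that collects N photons has noise √N and a signal-to-noise ratio of √N. The photon density is a hypothetical value, and read noise, fill factor and quantum efficiency are ignored; the references in this note give the full treatment.

```python
import math


def shot_noise_snr(pixel_pitch_um, photons_per_um2):
    """Per-pixel SNR when photon shot noise (Poisson) is the only noise source."""
    signal = photons_per_um2 * pixel_pitch_um ** 2  # photons collected by one square pixel
    return math.sqrt(signal)  # Poisson statistics: noise = sqrt(signal), so SNR = sqrt(signal)


EXPOSURE = 100.0  # hypothetical photon density on the sensor, photons per square micrometer

for pitch in (8.0, 6.4, 4.0, 2.0):
    snr = shot_noise_snr(pitch, EXPOSURE)
    print(f"{pitch:.1f} um pixel: SNR {snr:.0f} ({20 * math.log10(snr):.1f} dB)")
```

Halving the pixel pitch quarters the pixel area and the photon count, which halves the per-pixel SNR (about 6 dB). This is a per-pixel comparison only.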

[8] Sampling is expressed in “samples per millimeter”, and one sample corresponds to one pixel. Signal frequency is expressed in “cycles per millimeter”, and one cycle corresponds to one line pair. Accordingly, the Nyquist theorem says the sampling frequency (in samples per millimeter) must exceed twice the highest signal frequency (in cycles per millimeter), that is, more than two samples per cycle, which corresponds to more than two pixels per line pair. Twice the highest signal frequency is called the Nyquist rate.
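The same bookkeeping turns a pixel pitch into the highest line-pair frequency a sensor can record, often called the Nyquist frequency (half the sampling frequency). The sensor dimensions below are hypothetical, chosen only to illustrate the arithmetic.

```python
def nyquist_lp_per_mm(pixel_pitch_mm):
    """Nyquist frequency of a sensor: one line pair needs two pixels."""
    return 1.0 / (2.0 * pixel_pitch_mm)


def pixel_pitch_mm(sensor_width_mm, pixels_across):
    """Center-to-center pixel spacing, assuming the pixels tile the sensor width."""
    return sensor_width_mm / pixels_across


# Hypothetical full-frame sensor: 36 mm wide, 5600 pixels across.
pitch = pixel_pitch_mm(36.0, 5600)
print(f"pitch {pitch * 1000:.2f} um -> Nyquist frequency {nyquist_lp_per_mm(pitch):.1f} lp/mm")
```

Detail finer than this frequency is not resolved but aliased, which is why lens resolution beyond the sensor’s Nyquist frequency needs care in interpretation.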

[9] Sampling is the reduction of a continuous signal to a discrete one, as when audio equipment represents a sound wave by a set of values equally spaced in time. The Nyquist theorem applies only to band-limited signals of this kind. However, the line pairs of a resolution test chart don’t provide a band-limited signal to the sensor, but a square wave instead, whose sharp edges contain harmonics at ever higher frequencies. For this reason Clark’s scanning experiment needed a sampling frequency much higher than the Nyquist rate. For a two-level pattern like this, R.N. Clark provides an intuitive explanation of the Nyquist theorem: you try to resolve pairs of lines (one black, one white), and to do so you need two pixels, one for each line. This is true only if the lines and the pixels are perfectly aligned, that is, if signal and sampling are in phase. Since this cannot be expected in practice, the number of pixels per line pair should be higher than two.
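The phase argument can be checked numerically. The sketch below is our own illustration, not Clark’s experiment: it assumes ideal square pixels with no gaps and no lens blur, lays them over a black-and-white bar pattern, and records the average brightness each pixel sees as the pattern is shifted. Modulation is taken here as the difference between the brightest and darkest pixel.

```python
import math


def bar_integral(x, bar_width):
    """Integral from 0 to x of a bar pattern: white (1) on [0, w), black (0) on [w, 2w), repeating."""
    period = 2.0 * bar_width
    full_periods = math.floor(x / period)
    remainder = x - full_periods * period
    return full_periods * bar_width + min(remainder, bar_width)


def pixel_values(pixels_per_line_pair, phase, n_pixels=12, bar_width=1.0):
    """Mean brightness recorded by adjacent square pixels, the first starting at `phase`."""
    pitch = 2.0 * bar_width / pixels_per_line_pair
    values = []
    for i in range(n_pixels):
        start = phase + i * pitch
        end = start + pitch
        values.append((bar_integral(end, bar_width) - bar_integral(start, bar_width)) / pitch)
    return values


def modulation(values):
    """Peak-to-peak difference between the brightest and darkest recorded pixels."""
    return max(values) - min(values)


phases = [k / 20 for k in range(40)]  # pattern offsets covering one full line pair (2 bar widths)
for ppl in (2, 3, 4):
    worst = min(modulation(pixel_values(ppl, p)) for p in phases)
    print(f"{ppl} pixels per line pair: worst-case modulation {worst:.2f}")
```

At exactly two pixels per line pair, a shift of half a bar makes every pixel straddle one black and one white line, so all pixels record the same mid-grey and the pattern vanishes; with three pixels per line pair some modulation survives at every phase.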

[10] This implies 4 pixels for the complete Airy disk. In Bayer-type sensors the sampling unit is not the pixel, but the 2×2 matrix formed by 2 green, 1 red and 1 blue pixels. Therefore, to avoid chromatic aliasing, the sampling must be doubled, that is, 4 pixels per Airy disk diameter (16 pixels for a complete disk). Anti-aliasing filters help avoid aliasing artefacts at lower sampling frequencies. See Catrysse, P. and Wandell, B. 2005. “Roadmap for CMOS image sensors: Moore meets Planck and Sommerfeld”. Proceedings of the SPIE, Volume 5678, pp. 1–13, page 6; and Rhodes et al. 2004. “CMOS Imager Technology Shrinks and Image Performance”. IEEE Workshop on Microelectronics and Electron Devices, pp. 7–18, page 9.
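Under the rule of thumb in this note (2 pixels per Airy disk diameter, doubled to 4 for Bayer sensors), the largest useful pixel pitch at a given aperture follows from the Airy disk diameter, 2.44 λN, where λ is the wavelength and N the f-number. The sketch assumes green light at 550 nm; the f-numbers are illustrative.

```python
AIRY_FACTOR = 2.44  # diameter to the first dark ring = 2.44 * wavelength * f-number


def airy_diameter_um(f_number, wavelength_um=0.55):
    """Airy disk diameter in micrometers (first dark ring to first dark ring)."""
    return AIRY_FACTOR * wavelength_um * f_number


def max_pixel_pitch_um(f_number, bayer=True, wavelength_um=0.55):
    """Largest pitch giving 2 pixels (monochrome) or 4 pixels (Bayer) per Airy diameter,
    following the rule of thumb stated in this note."""
    pixels_per_diameter = 4 if bayer else 2
    return airy_diameter_um(f_number, wavelength_um) / pixels_per_diameter


for n in (2.8, 5.6, 8, 11, 16):
    print(f"f/{n}: Airy disk {airy_diameter_um(n):.1f} um, "
          f"pitch <= {max_pixel_pitch_um(n, bayer=False):.2f} um (mono), "
          f"{max_pixel_pitch_um(n):.2f} um (Bayer)")
```

At f/8, for example, the Airy disk is about 10.7 µm across, so the rule calls for pixels of about 5.4 µm on a monochrome sensor and 2.7 µm on a Bayer sensor.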

[11] The Leica APO-Telyt-R 280mm f/4 is reported to be a diffraction-limited lens. This is a rare case, and a very expensive one.
