By Charles Sidney Johnson, Jr.

Questions like this and misleading answers fill the photographic forums. One “expert” says that lenses are not equivalent to anything else, and another claims that a big sensor is necessary to get a small depth-of-field. These are at best half-truths. So what is correct?

It is easier to understand the important points by considering what is necessary to obtain an “equivalent” or identical image with different camera/sensor sizes. In order to obtain an equivalent image, all of the following properties must be the same:

- Angle of view (field of view)

- Perspective

- Depth-of-field (DoF)

- Diffraction broadening

- Shutter speed/exposure

According to this definition, two equivalent images will appear to be the same in all aspects, and the observer will not be able to detect any evidence of the size of the camera/sensor that was used. This still leaves “noise,” pixel count, and lens quality to be considered later. The starting point is to recognize that typical cameras have sensors that range in size from about 4mm by 6mm to 24mm by 36mm, a factor of six in width. Let’s call the small sensor Point-and-Shoot (PS) and the larger sensor Full Frame (FF). Of course, we will have to enlarge the image from the PS sensor by a factor of six more than the image from the FF sensor to be able to compare them.

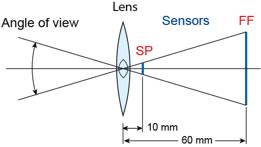

Angle-of-view: We can maintain the angle-of-view by scaling the focal length (f) as shown in Fig. 1. If the lens on the FF camera is set for 60mm, then the PS lens must be set at 60mm/6 = 10mm.

Figure 1: The horizontal angle of view for FF sensor (focal length = 60mm) and PS sensor (focal length = 10mm).
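The scaling can be checked numerically. The sketch below is a minimal illustration, assuming an ideal rectilinear lens focused at infinity, for which the horizontal angle of view is $2\arctan(w/2f)$ with sensor width $w$; the helper name `horizontal_aov_deg` is hypothetical. The 36 mm and 6 mm widths come from the sensor sizes quoted above.

```python
import math


def horizontal_aov_deg(sensor_width_mm: float, focal_length_mm: float) -> float:
    """Horizontal angle of view (degrees) of an ideal rectilinear lens at infinity."""
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))


# Widths from the text: FF = 36 mm, PS ~ 6 mm (a factor of six smaller).
aov_ff = horizontal_aov_deg(36.0, 60.0)
aov_ps = horizontal_aov_deg(6.0, 60.0 / 6)   # focal length scaled by the same factor

print(f"FF at 60 mm: {aov_ff:.1f} deg")
print(f"PS at 10 mm: {aov_ps:.1f} deg")
```

Both lines report about 33.4 degrees, because the width-to-focal-length ratio is identical for the two setups.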

By scaling the focal length so that it is proportional to the sensor width, we have obtained an image that is similar in one way; but it may not really be equivalent when the other properties are considered. To understand how to make an equivalent image with the PS and FF cameras, we need to investigate which properties can be scaled and which cannot. Of course, nothing about the real world changes when we use a different sensor, and in particular there is no change in:

- the desired distance between the object and the lens

- the size of the object to be photographed

- the wavelength of light

With these invariants in mind, we can proceed through the list of image properties.

Perspective: This is an easy item to address because “perspective” depends only on the position of the camera lens. The focal length has no effect on the perspective and only determines the size of the image. No matter what size camera/sensor we use, we just have to position the lens in the same place. The idea that we can change perspective by switching to a wide angle lens is, therefore, incorrect. The perspective only changes when we move relative to the subject.

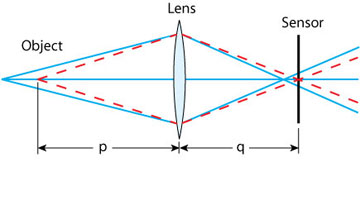

Depth-of-Field: Actually, only objects at one distance from the camera are in perfect focus, but the human eye has limitations, and objects at other distances may appear to be in focus in a photograph. The range of distances where things are in acceptable focus defines what we mean by DoF. Figure 2 shows that a point source at the distance p in front of the lens is focused exactly at the distance q behind the lens, i.e. the red dotted lines come together at the sensor. The blue lines from another point more distant from the lens are focused at a point in front of the sensor, and when they reach the sensor they have diverged to define a circle. Rays from an object closer to the lens than the distance p would focus behind the sensor and would also define a circle on the sensor. These circles are called circles of confusion, CoC. If the CoC is small enough, the object is said to be in focus and the distance to the object falls within the DoF. The subjective part of this calculation involves deciding on an acceptable CoC. After that is done, the remainder of the calculation is simple, albeit tedious, geometry.

Figure 2: Light rays from an object to a detector.

However, there is a caveat. The type of DoF computation depends on the way photographs will be viewed! In principle a photograph should always be viewed from its proper perspective point. That is to say, the angle subtended by the photograph at the eye should be the same as the field of view of the lens used to take the photograph. Therefore, photographs taken with a wide angle lens should be held close to the eye so as to fill much of the field of view while telephoto photographs should be held farther away. It is sometimes forgotten that perspective in a photograph depends only on the position of the lens relative to the subject (object).

So how will the photographs be viewed? In fact, prints are usually viewed from 10” to 12” regardless of the focal length of lens used to make the photograph. When mounted prints are viewed, observers typically stand about the same distance from all prints. Observers generally don’t know what focal length lens was used, and they simply react to the apparent distortions present when a wide angle photograph is viewed from a distance greater than the perspective point. Similarly, there is an apparent flattening effect when telephoto photographs are viewed too close to the eye. Also, in photographic shows the audience remains seated at the same distance from all prints and from the projection screen. Under usual viewing conditions, it is appropriate to compute the DoF with a constant CoC in the image regardless of the focal length of the lens.

So what is the CoC? This choice depends on how good the human eye is at resolving closely spaced dots or lines. Various authors quote the acuity of the human eye in the range of 0.5 to 1.5 minutes of arc (1 deg = 60 min of arc). To put this information in context, I note that 1 minute of arc corresponds to the diameter of a dime (18 mm) viewed from 68 yards, or 1 part in 3500. Of course, the contrast in photographs is less than that in eye tests that involve black lines on white backgrounds, so we need to relax this requirement a bit. I will follow standard practice and choose 1 part in 1500 as the defining angle. (This is the most conservative value suggested by Canon, though the “Zeiss formula” uses 1730.) What this means is that the CoC for a given sensor is taken to be the sensor diagonal length divided by 1500. For the FF sensor (or 35 mm film) this gives a CoC of about 30 micrometers, while for the PS sensor the CoC is only 5 micrometers. There is nothing sacred about this choice of CoC. If very large prints are to be made, perhaps the CoC should be reduced; and vice versa, if only 4″ by 6″ prints are needed, one can get away with a larger CoC. Note that for an 11″ by 14″ print the CoC is about 0.3 mm.
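The CoC rule is simple arithmetic, sketched below under the assumption that the PS sensor measures exactly 4 mm by 6 mm (real small sensors vary). The helper `coc_mm` is hypothetical; it divides the format diagonal by 1500. The last lines check the dime comparison using the small-angle approximation (arc length taken as equal to the chord).

```python
import math


def coc_mm(diagonal_mm: float, parts: float = 1500) -> float:
    """Circle of confusion taken as a fixed fraction of the format diagonal."""
    return diagonal_mm / parts


ff_diag = math.hypot(24, 36)              # ~43.3 mm
ps_diag = math.hypot(4, 6)                # ~7.2 mm (assumed 4 x 6 mm sensor)
print_diag = math.hypot(11, 14) * 25.4    # 11 x 14 inch print, converted to mm

for name, diag in [("FF", ff_diag), ("PS", ps_diag), ("11x14 print", print_diag)]:
    print(f"{name}: diagonal {diag:.1f} mm, CoC {coc_mm(diag) * 1000:.0f} um")

# Acuity sanity check: an 18 mm dime viewed from 68 yards (1 yd = 0.9144 m).
dime_angle_arcmin = math.degrees(0.018 / (68 * 0.9144)) * 60
print(f"dime at 68 yd subtends {dime_angle_arcmin:.2f} arcmin")
```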

I start with the distance to the object (p), the selected focal length (f), and the CoC for each sensor size. This provides enough information for the calculation of the DoF for each value of the lens aperture d or the f-number (N), since the aperture equals the focal length divided by N, i.e. d = f / N. The necessary DoF equations, derived with geometry, are well known and relatively simple, and so are the results. Namely, the predicted DoF increases without limit to cover all distances as N is increased without limit, even though the actual resolution will be degraded by diffraction effects. Therefore, the maximum useful DoF is limited by diffraction, and it is necessary that we quantify diffraction effects before completing this calculation.
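To illustrate how the geometric DoF grows with N, here is a sketch using the standard thin-lens hyperfocal-distance formulas for the near and far limits. This is a textbook form chosen for illustration, not necessarily the exact equations used in this essay; the 100 mm lens, 5 ft (1524 mm) focus distance, and 0.029 mm CoC are assumed example values, and `dof_limits` is a hypothetical helper.

```python
import math


def dof_limits(p_mm, f_mm, n, coc_mm):
    """Near and far limits of acceptable focus for a thin lens and constant CoC.

    Uses the hyperfocal distance H = f^2 / (N * CoC) + f; the far limit is
    infinite once the focus distance p reaches H.
    """
    h = f_mm ** 2 / (n * coc_mm) + f_mm
    near = p_mm * (h - f_mm) / (h + p_mm - 2 * f_mm)
    far = p_mm * (h - f_mm) / (h - p_mm) if p_mm < h else math.inf
    return near, far


# Assumed example: FF camera, 100 mm lens focused at 1524 mm, CoC = 0.029 mm.
for n in (2.8, 8, 21, 64, 256):
    near, far = dof_limits(1524, 100, n, 0.029)
    print(f"N = {n:>5}: near {near:7.0f} mm, far {far:7.0f} mm, DoF {far - near:7.0f} mm")
```

With these assumptions the DoF widens steadily as N rises. Once the hyperfocal distance falls to the focus distance (near N = 240 here), the far limit becomes infinite. That is why diffraction, not geometry, has to set the upper bound on N.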

Diffraction Broadening: Diffraction is ubiquitous in nature, but it is usually considered to be a curiosity. Sometimes diffraction is so obvious that it cannot be ignored. We have all seen the rainbow of colors that appear when white light is diffracted from the surface of a CD or DVD. This occurs because the rows of data pits are separated by distances close to the wavelength of light. (The track spacing on a DVD is about 740 nm, comparable to the roughly 650 nm wavelength of red light.) When we go back to first principles, we see that everything having to do with the propagation of light depends on the fact that light is an electromagnetic wave. Diffraction effects become noticeable when light waves interact with small apertures where all of the light rays are constrained to be close together.

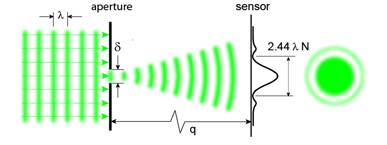

The distribution of intensity on the sensor for a point source of light depends on the size and shape of the aperture. For a circular aperture the image has a bright central dot encircled by faint rings as illustrated in Fig. 3. This pattern, the Airy disk, is well-known in astronomy.

Figure 3: The effect of a small aperture on light incident from the left. The vertical scale is greatly exaggerated to reveal the intensity distribution in the diffraction spot.

The radius at which the intensity of the central spot reaches zero, i.e. the first dark ring, serves to define the diffraction spot size. With this definition, the diameter of the diffraction spot on the sensor is $2.44\,\lambda N$, where $\lambda$ is the wavelength of the incident light and $N$ is the f-number.

The circle-of-confusion (CoC) is the key to this analysis. I stated that the CoC has been chosen to define the region where the focus is good enough to be included in the DoF. Now I assert that high resolution in the DoF region also requires that the diffraction spot be less than or equal to the CoC. This upper limit on the diffraction spot size requires that $2.44\,\lambda N = \mathrm{CoC} = \mathrm{Diag}/1500$, and we immediately have a determination of the maximum useful N for any wavelength $\lambda$. Visible light has wavelengths in the range 400 nm (blue) to 700 nm (red), and, therefore, diffraction effects are greater with red light. For the calculation of N, I have selected $\lambda = 555$ nm, the wavelength that corresponds to the region of maximum sensitivity of the human eye. The result is surprisingly simple. Solving for N gives $N = \mathrm{Diag}/(1500 \times 2.44\,\lambda)$, which with Diag in mm and $\lambda = 5.55 \times 10^{-4}$ mm is about $0.49 \times \mathrm{Diag}$. So, to compute the largest useful N value for any camera, simply determine the diagonal dimension of the sensor in mm and multiply that number by 0.5. The computed values of N, CoC, and scaled f for various sensor sizes are shown in Table 1.

Table 1: f-numbers (N), circles-of-confusion (CoC) for some common sensors.

| Sensor/film type | Diagonal (mm) | Max f-number (N) | CoC (µm) | f / f_FF |
|---|---|---|---|---|
| 1/2.5” | 7.18 | 3.5 | 4.8 | 0.17 |
| 1/1.8” | 8.93 | 4.4 | 5.9 | 0.21 |
| 2/3” | 11.0 | 5.4 | 7.3 | 0.25 |
| 4/3” | 22.5 | 11 | 15 | 0.52 |
| APS-C | 28.4 | 14 | 19 | 0.65 |
| 35mm (FF) | 43.4 | 21 | 29 | 1.0 |
| 6 x 7 | 92.2 | 45 | 61 | 2.1 |

Here f_FF refers to the focal length of the lens on the full frame camera that serves as a reference. The important point is that the value of N computed for a given sensor size is the largest value that can be used without degrading the image. This calculation applies to an ideal lens where the performance is diffraction limited. Real lenses have various aberrations and usually show the best resolution a couple of stops from the largest aperture. However, as N is increased (aperture is decreased), tests show that performance decreases primarily because of diffraction. Note: If a photograph is cropped, the CoC should be adjusted to the new diagonal, i.e. reduced.
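Table 1 can be regenerated from the two conditions above, CoC = Diag/1500 and $2.44\,\lambda N$ = CoC. The sketch below assumes the 43.4 mm FF diagonal and $\lambda$ = 555 nm used in the text; `max_useful_n` is a hypothetical helper that solves the diffraction condition for N. Small differences from the table (for example 6.0 versus 5.9 µm for the 1/1.8” sensor) come from rounding.

```python
WAVELENGTH_MM = 555e-6   # 555 nm, near the eye's peak sensitivity (value from the text)
FF_DIAG_MM = 43.4        # full-frame diagonal used in Table 1


def max_useful_n(diag_mm, wavelength_mm=WAVELENGTH_MM):
    """Largest N whose Airy-disk diameter 2.44*lambda*N equals CoC = diag/1500."""
    return (diag_mm / 1500) / (2.44 * wavelength_mm)


# Sensor diagonals (mm) as listed in Table 1.
sensors = {
    "1/2.5in": 7.18, "1/1.8in": 8.93, "2/3in": 11.0, "4/3in": 22.5,
    "APS-C": 28.4, "35mm (FF)": 43.4, "6 x 7": 92.2,
}

rows = {}
for name, diag in sensors.items():
    n = max_useful_n(diag)
    coc_um = diag / 1500 * 1000      # mm -> micrometres
    f_ratio = diag / FF_DIAG_MM      # focal length relative to FF for the same view
    rows[name] = (n, coc_um, f_ratio)
    print(f"{name:>10}: N = {n:4.1f}  CoC = {coc_um:4.1f} um  f/f_FF = {f_ratio:.2f}")

print(f"N per mm of diagonal: {max_useful_n(1.0):.3f}")
```

The last line prints about 0.492, the factor the text rounds to 0.5.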

Now, given the shooting distance (p), the focal length (f) and CoC for each sensor size, and the maximum N from the diffraction computation (Table 1), we have everything required to determine the DoF. For this calculation I have used a DoF equation that is appropriate for a constant CoC in the image. This equation is derived in standard optics texts, and I have chosen an approximate form that is accurate when the distance p is much greater than the focal length f and the DoF does not extend to infinity.

$$\mathrm{DoF} = \frac{2p^2 N\,\mathrm{CoC}}{f^2} = \frac{2p^2\,\mathrm{CoC}}{f\,d}$$

What we find is that the DoF is the same for all sensor sizes, because the focal length, the f-number, and the CoC all scale with the sensor diagonal, so the factor $N\,\mathrm{CoC}/f^2$ does not change. Also, the lens aperture d = f / N remains the same regardless of sensor size. For example, suppose that the image of a 2 ft object completely fills the diagonal dimension of the sensor when photographed from 5 ft. For a FF camera this requires a focal length of about 100 mm. The optimum f-number is 21, the aperture is 5 mm, and the DoF turns out to be about 10 in. In contrast to this, the PS camera with the smallest sensor achieves an equivalent image with an 18 mm focal length and an f-number of 3.5.
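The invariance claim can be checked with the approximate DoF formula. In this sketch the FF focal length is estimated from the thin-lens relation $f = p\,m/(1+m)$, with magnification $m$ = sensor diagonal / subject size; that step, the helper name `approx_dof`, and the choice of the 1/2.5” sensor from Table 1 are assumptions for illustration. Scaling f, N, and CoC by the same factor should leave both the DoF and the aperture d unchanged.

```python
import math


def approx_dof(p, f, n, coc):
    """DoF ~ 2 p^2 N CoC / f^2, valid for p >> f and DoF not reaching infinity."""
    return 2 * p ** 2 * n * coc / f ** 2


P_MM = 5 * 304.8           # 5 ft shooting distance
SUBJECT_MM = 2 * 304.8     # 2 ft object spanning the sensor diagonal
FF_DIAG = 43.4

# Thin-lens focal length that images the subject onto the full FF diagonal.
m = FF_DIAG / SUBJECT_MM
f_ff = P_MM * m / (1 + m)  # roughly 100 mm

ff = dict(f=f_ff, n=21, coc=FF_DIAG / 1500)
k = 7.18 / FF_DIAG         # scale factor for the 1/2.5in sensor
ps = dict(f=f_ff * k, n=21 * k, coc=ff["coc"] * k)

results = {}
for name, cam in (("FF", ff), ("PS", ps)):
    dof = approx_dof(P_MM, cam["f"], cam["n"], cam["coc"])
    results[name] = dof
    print(f"{name}: f = {cam['f']:.0f} mm, N = {cam['n']:.1f}, "
          f"d = {cam['f'] / cam['n']:.1f} mm, DoF = {dof / 25.4:.1f} in")

print("identical DoF:", math.isclose(results["FF"], results["PS"]))
```

The FF DoF from the approximate formula comes out near 11 in, in line with the essay's “about 10 in”; the small gap reflects the approximation and rounding, not a difference between sensors.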

Shutter speed/exposure: This final property is very interesting. Suppose that the FF camera in the last example required 1/30 sec at an ISO sensitivity of 800 for an adequate exposure. The PS camera with f-number 3.5 admits $(21/3.5)^2 = 36$ times more light and can achieve the same exposure with a shutter speed of 1/1000 sec. This calculation depends on the idea that the area of the aperture quadruples when the f-number decreases by a factor of two. On the other hand, the equivalent photograph requires that we use the same shutter speed to make sure that motion blur is the same. Therefore, in order to obtain the proper exposure, we must decrease the ISO sensitivity of the PS camera by a factor of 36 to obtain an ISO number of 22. Of course, most cameras cannot go this low in sensitivity, but maybe we can select 50. This demonstrates that small sensors do not have to perform well at high ISO settings in order to obtain an equivalent image with maximum useful DoF.
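The exposure bookkeeping reduces to a ratio of aperture areas. The sketch below assumes that exposure scales as shutter time times ISO divided by $N^2$ (simple reciprocity); the variable names are illustrative.

```python
import math

n_ff, n_ps = 21, 3.5
iso_ff, t_ff = 800, 1 / 30

light_ratio = (n_ff / n_ps) ** 2       # aperture area scales as 1/N^2
t_ps_same_iso = t_ff / light_ratio      # option 1: keep ISO, shorten the shutter
iso_ps_same_t = iso_ff / light_ratio    # option 2: keep the shutter, lower the ISO

print(f"light ratio: {light_ratio:.0f}x ({math.log2(light_ratio):.1f} stops)")
print(f"same ISO {iso_ff}: shutter 1/{1 / t_ps_same_iso:.0f} s")
print(f"same shutter 1/30 s: ISO {iso_ps_same_t:.0f}")
```

It prints a 36x light ratio, a shutter speed of 1/1080 s (the 1/1000 sec quoted above), and ISO 22.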

___________________________________________________________

Comments and Conclusions

I have demonstrated that sensors with different sizes can be used to make identical images if the appropriate ranges of focal lengths, f-numbers, and ISO sensitivities are available. This also assumes that lenses have sufficient resolving power and that the pixel density is high enough not to degrade resolution. The main points are the following:

1. The sensor size alone determines the maximum useful f-number (N); and, in fact, the maximum f-number for high-resolution photography is given by 0.5 times the sensor diagonal in mm.

2. We can take an essentially identical photograph with any sensor size by scaling the focal length, the f-number, and the ISO sensitivity.

3. The smallest sensor we considered (denoted 1/2.5”) achieves maximum useful DoF at an f-number of N = 3.5, while the full frame 35 mm sensor requires N = 21, a factor of 36 difference in transmitted light. If the full frame sensor gives the same signal/noise ratio (S/N) at ISO sensitivity 1600 as the small sensor does at ISO 80, the small sensor can still use a higher shutter speed. A PS sensor that could give low noise at ISO 800 or 1600 would appear to have a real advantage over FF sensors.

4. If maximizing the DoF is not the aim, larger sensors clearly win because of their ISO sensitivity advantage. A fast lens (N = 1.4) with a full frame detector is impossible to match with the small sensor (an equivalent image would require about N = 0.23 on the 1/2.5” sensor). Probably N = 1 is the maximum aperture we can expect with a small sensor, and no company at present even offers N = 2. The take-home lesson is that small sensors should be coupled with large aperture lenses, i.e. small N values. Also, small sensors that support large ISO sensitivities should be sought. The vendors are showing some interest in higher sensitivities, but larger lenses are in conflict with their drive to smaller cameras. Unfortunately, none of the available PS cameras offer very high quality lenses.

5. What about pixel count? Doesn’t that also influence resolution? Each pixel typically contains one sensor element, so nothing smaller than one pixel can be resolved. It is actually worse than that, because in most sensors each pixel only records the intensity of one color. It is then necessary in hardware or with a RAW converter program to combine information from neighboring pixels to assign three color values (R, G, and B) to each pixel site. As a rule of thumb, we should double the size of a pixel to approximate its effect on resolution. In other words, when the number of RGB pixels on the diagonal divided by two approaches 1500, the number used to define the CoC, pixel size begins to influence resolution. A 10 megapixel sensor with a 2:3 aspect ratio has about 4600 pixels on the diagonal, and the safety margin is not very large.
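The pixel-count estimate can be reproduced as follows, assuming a 3:2 frame (the 2:3 aspect ratio mentioned in the text) and the rule of thumb of halving the diagonal pixel count to account for Bayer interpolation; `diagonal_pixels` is a hypothetical helper.

```python
import math


def diagonal_pixels(megapixels, aspect=(3, 2)):
    """Number of pixels along the diagonal of a sensor with the given aspect ratio."""
    w_ratio, h_ratio = aspect
    height = math.sqrt(megapixels * 1e6 * h_ratio / w_ratio)
    width = height * w_ratio / h_ratio
    return math.hypot(width, height)


diag_px = diagonal_pixels(10)
effective = diag_px / 2          # rule of thumb from the text: double the pixel size
print(f"diagonal: {diag_px:.0f} px, effective: {effective:.0f}, "
      f"margin over 1500: {effective / 1500:.2f}x")
```

A 10 megapixel 3:2 sensor comes out at roughly 4650 diagonal pixels, or about 1.55 times the 1500 that defines the CoC after halving, which is the modest safety margin described above.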

6. The fundamental limitation on DoF is imposed by diffraction effects. Does technology offer any hope in the future for circumventing the diffraction barrier? The answer sounds almost like science fiction. In principle, materials with negative refractive index (NIM) make “perfect” lenses that are not limited by diffraction. No naturally occurring NIM exists, but artificial materials (metamaterials) have been fabricated that display negative refractive index for microwaves. In 2005 a negative-index material consisting of minuscule gold rods embedded in glass was reported that works at near-IR wavelengths. No NIMs are in sight for visible light, and if one is constructed it will probably only be effective at one wavelength, but it is fun to fantasize. NIMs are hot topics in the optics world, and there are many papers exploring their weird properties.

___________________________________________________________

Appendix: An Illustration of Maximum DoF with Different Sensors

I have compared two readily available digital cameras with very different sensor sizes by photographing a tabletop scene with each camera. The perspective (location of the lens relative to the subject) was nearly identical for each camera, and the focal lengths were adjusted to give the same angle of view. In each case, 6” in the plane of focus represented about 40% of the length of the sensor diagonal, and the lens-to-object distance was about 32 in. In addition, the f-numbers (N) were computed as described above by multiplying the sensor diagonal by 0.5. The results are shown in Figs. 4 and 5. It was necessary to crop these photos to give the same aspect ratios, since the APS sensor is 3:2 while the 1/1.8” sensor is about 4:3.

Figure 4: Photograph taken with a Canon S80 8 megapixel camera. The maximum focal length for the lens was used.

Figure 5: Photograph taken with a Canon 10D 6 megapixel camera with a 28–135 mm zoom lens. The focal length of the lens was adjusted to match the angle of view of the S80.

The computed parameters for the S80 were f = 19 mm and N = 4.4; however, I settled for f = 20.7 mm, the maximum focal length, and N = 5.3, the minimum value available at full zoom. For the 10D, the computed values were about f = 56 mm and N = 14. The focal length was set to approximately 57 mm, and N = 14 was approximated by the available N = 16 value. The ISO sensitivity was set to 50 on the S80 and 800 on the 10D to compensate for the difference in N values. Since the ratio of light intensity at the sensors was about $(16/5.3)^2 \approx 9$, ISO 800 was higher than necessary for the 10D, but it shows that the larger camera can compete even with much higher N values.
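The appendix exposure comparison can be checked the same way. This sketch assumes both cameras used the same shutter speed and that exposure scales as $1/N^2$; it computes the 10D ISO that would have matched the S80 at ISO 50. The variable names are illustrative.

```python
import math

n_s80, n_10d = 5.3, 16
iso_s80, iso_10d_used = 50, 800

light_ratio = (n_10d / n_s80) ** 2       # ~9.1x less light reaches the 10D sensor
iso_10d_needed = iso_s80 * light_ratio   # 10D ISO that matches the S80 exposure
extra_stops = math.log2(iso_10d_used / iso_10d_needed)

print(f"light ratio {light_ratio:.1f}, ISO needed {iso_10d_needed:.0f}, "
      f"ISO 800 is {extra_stops:.1f} stop(s) more than needed")
```

The result, about ISO 456, suggests that ISO 800 overshoots by a little less than one stop.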

The photographs appear to be almost identical when enlarged and examined on a computer screen, and the DoF was found to be roughly equal to the computed value of 4.0 cm. Since the S80 image showed signs of in-camera sharpening, the 10D image was given minimal sharpening to account for the anti-aliasing filter (Photoshop: unsharp mask with radius = 0.3 and strength = 300). The 10D image showed more noise than the S80 image because of the much higher ISO value used. Also, the S80 image exhibited slightly higher resolution, probably because of the larger pixel count.

So what happens when the f-number N is increased? This question was investigated as follows. An image was obtained with the S80 at N = 8, the largest available value. Also, an image was obtained with the 10D at N = 22. As expected, both of these images are slightly softer in the plane of best focus than the images obtained at the computed N values. Therefore, the CoC values and the computed N values are reasonable.

One obvious conclusion is that the S80 images are remarkably good, but the range of settings is severely limited. At full zoom, all of the f-number range (N = 5.3 to 8) suffers from diffraction-limited resolution, and there is no possibility to limit the DoF if that is desired.

___________________________________________________________

References

Elementary Optics:

Kingslake, R., Lenses in Photography. 1-246. 1951. Garden City Books.

Fowles, G. R., Introduction to Modern Optics. 2nd Ed. 1-328. 1975. Dover Publications, Inc.

Why we need lenses, how they work, etc.:

Feynman, R. P., QED: The Strange Theory of Light and Matter. 1-158. 1985. Princeton Science Library.

(Probably the best science book written for laymen in the 20th century)

Sensors:

The Glossary in: http://www.dpreview.com/

(This is a gold mine of information about digital cameras.)

Metamaterials and Negative Refractive Index:

D. R. Smith, J. B. Pendry, and M. C. K. Wiltshire, Science, 305, 788-792 (2004).

© 2007, Charles Sidney Johnson, Jr.

___________________________________________________________

Charles S. Johnson, Jr. is Professor of Chemistry Emeritus at the University of North Carolina at Chapel Hill. He has authored approximately 150 research papers as well as books on laser light scattering and quantum mechanics. His interest in photography goes back to the 1950’s when he started documenting his high school and hometown and doing freelance work with a Rolleicord III and a Zeiss Ikonta 35. However, for many years his career in science left little time for serious photography. Now in addition to enjoying nature and travel photography as a hobby, he is making use of his science background to write a book that reveals the science behind photography. This book deals with fundamental questions such as the behavior of light, how lenses work, sensors versus the human eye, our perception of color, and the art of composition. He is also presenting some of this material in essays on timely topics in digital photography. The book and the essays are, of course, aimed at those individuals who enjoy understanding how things work.

___________________________________________________________

Another Perspective – By Nathan Myhrvold

Nathan Myhrvold was formerly Chief Technology Officer at Microsoft, and is co-founder of Intellectual Ventures.

I think that Charles Johnson’s article on DOF and sensor issues is excellent and will inform the discussion of the relative merits of various sensors and cameras. It covers the basic optical facts very well.

However, I think that there are some additional points that can help clarify the issue. Johnson’s treatment approaches the COF in the traditional photographic way: the minimum resolution difference that can be seen in a final print. If you know the final print size, then you can calculate the COF based on the print and some assumption about human vision.

There is a different approach one can take in the new digital world – ask what is the maximum resolution that a given image sensor can produce, and then match the COF to that. This is more in the spirit of the way many people photograph – i.e. how can I get the best out of my equipment? Later on I might make a wallet sized photo (in which case resolution hardly matters), but I might make a large print and I want to know the maximum quality I can get. The only reason to get concerned about digital sensor quality these days is when you consider maximum size prints – any camera can do OK with very small prints. The same is true for DOF.

While Johnson’s treatment works for a fixed size print, the converse is to consider how to get the maximum image quality, i.e. the biggest and best print that you can possibly get. From this standpoint you don’t ask about the final print size; instead you ask what is the best quality that my camera can possibly render. As an example, I use the Canon EOS 1Ds Mark II. It has a pixel size of 7.2 microns, which is 7200 nanometers. However, the pixels are arranged in a Bayer array: half of the pixels are sensitive only to green light, while the other half are split 25% to red and 25% to blue.

The camera will achieve diffraction limited resolution when the Airy disk diffraction formula 2.44 * N * Lambda is equal to the effective pixel size. In terms of Johnson’s article, this means that the COF is the pixel size. For green light (where the wavelength Lambda = 550nm), which is where human vision is most sensitive (and close to the 555 nm used in Johnson’s article), the most conservative value of COF is equal to the pixel diagonal, which is 1.414 (square root of 2) times the pixel size. For red light (Lambda = 700 nm) and blue light (Lambda = 400 nm) we can use 2 times the pixel size, since those pixels are spaced further apart.

In the case of the Canon EOS 1Ds Mark II, the green light calculation suggests that N = 7.58, red gives N = 8.43, and blue gives N = 14.75. This shows that green light is not only the most sensitive; it is also the most demanding, and we can basically use it (as Johnson does in his article). Note, however, that red light gives a very similar value. Blue light, because its wavelength is so much shorter, is not a factor in the diffraction limit. The bottom line is that the Canon EOS 1Ds Mark II will suffer lower resolution from diffraction at any f-stop above f/8, at which point both red and green light are at their diffraction limited resolution.
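These three limits follow from setting the Airy-disk diameter equal to the per-color COF described above. The sketch below simply reproduces that arithmetic for the 7.2 micron pixel; `max_n` is a hypothetical helper, and all lengths are in microns.

```python
import math

PIXEL_UM = 7.2   # Canon EOS 1Ds Mark II pixel size, from the text


def max_n(coc_um, wavelength_um):
    """f-number at which the Airy-disk diameter 2.44 * lambda * N equals the COF."""
    return coc_um / (2.44 * wavelength_um)


# (COF, wavelength) per color: pixel diagonal for green, 2x pixel for red/blue.
cases = {
    "green": (math.sqrt(2) * PIXEL_UM, 0.550),
    "red": (2 * PIXEL_UM, 0.700),
    "blue": (2 * PIXEL_UM, 0.400),
}
limits = {color: max_n(coc, lam) for color, (coc, lam) in cases.items()}
for color, n in limits.items():
    print(f"{color}: N = {n:.2f}")
```

It prints 7.59, 8.43, and 14.75; the green value differs from the quoted 7.58 only by rounding.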

Another way to say this is that if you stop down beyond f/8 with this camera, you will reduce the resolution everywhere, including in the plane of perfect focus. If you take Johnson’s criterion that COF should be equal to the diffraction limit, then your maximum depth of field occurs at f/8.

The f/8 maximum is substantially different from Johnson’s result. The reason is that he takes the traditional formula of diagonal / 1500 to get the COF, yielding f/21 as the maximum depth of field point. However, this is equivalent to saying that the COF on the sensor is 19.2 microns (29 micron COF divided by root 2), or about 2.76 times bigger in each direction than the actual pixel size. Put another way, the “full frame” results that Johnson calculates effectively assume that there are 2 million pixels in the full frame sensor. That is a factor of 7.66 fewer than I actually have in my camera.

Now, I don’t think anybody would be very excited about turning their EOS 1Ds Mark II, or Canon 5D or other full frame camera into a 2 megapixel camera. It sounds pretty drastic, but that is exactly what you do when you stop down to f/22 – the diffraction limit imposes this condition. If you shoot with a full frame 24 x 36 sensor at f/22 you are throwing away a lot of resolution. There is no getting around this – it is fundamental in the physics of light.

This comes as a shock to many photographers I have talked to, who assume that f/22 is the way to get the sharpest possible prints. Well, it just ain’t so. Diffraction limits your resolution at high f-numbers.

Yet another way to say this is that Johnson’s criterion for COF is designed to yield an adequate 11 x 14 print. A properly exposed low noise 2 megapixel image file is sufficient for this, using his angular criterion for COF. But that is not why we use cameras with high pixel count.

Note that this is not quite the same as saying that it is equal to a 2 megapixel camera – that would only be true if the 2 megapixel camera was exposed with an f-stop that was within its diffraction limit.

Unlike Johnson’s calculation that depends only on sensor size, here we need to consider the pixel size.

The formula is max f-stop = P x 1.054, where P is the pixel size in microns. (The constant is the green-light case above: 1.414 / (2.44 x 0.55) ≈ 1.054.) Here are a few examples:

| Camera | Max f/stop | Max practical f/stop |
|---|---|---|
| Canon 5D | f/8.6 | f/9 |
| Canon 1Ds | f/9.3 | f/9 |
| Canon 1Ds Mark II | f/7.6 | f/8 |
| Nikon D2X | f/5.8 | f/5.6 |
| Canon 20D | f/6.76 | f/7.1 |
| Canon G7 | f/2.06 | f/2 |

Note that in each case this is the maximum f-stop to get the full resolution of the camera. It is perfectly OK to stop the lens down further than this, but know that when you do, you will be getting less than the full resolution. This may or may not matter – lots of people obsess about resolution pointlessly (pun intended).
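The constant 1.054 is the green-light case from above, $\sqrt{2}/(2.44 \times 0.55)$ with the pixel size in microns. Since the pixel sizes behind the table are not listed here (apart from 7.2 microns for the 1Ds Mark II), this sketch works backwards and prints the pixel pitch each listed f-stop implies. Those implied pitches are derived values, not manufacturer specifications.

```python
import math

# Constant in max f-stop = C * P: green light, COF = sqrt(2) * P, lambda = 0.55 um.
C = math.sqrt(2) / (2.44 * 0.55)

# "Max f/stop" values from the table above.
listed_max_n = {
    "Canon 5D": 8.6, "Canon 1Ds": 9.3, "Canon 1Ds Mark II": 7.6,
    "Nikon D2X": 5.8, "Canon 20D": 6.76, "Canon G7": 2.06,
}

print(f"C = {C:.3f}")
for camera, n in listed_max_n.items():
    print(f"{camera}: implied pixel pitch {n / C:.1f} um")
```

The 1Ds Mark II entry comes back to about 7.2 microns, matching the pixel size quoted earlier.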

So, for example, if you take a Canon EOS 1Ds Mark II and stop it down to f/9, you are going to get greater depth of field, but you will not get the full 16 million pixel resolution; instead you’ll get resolution more like a 5D or 1Ds. That is still plenty good for many purposes. In fact, if you want the greater depth of field, then it may well be worth it. So, I still stop down to f/16 or even f/22 on occasion, but only when I decide that depth of field is more important than resolution everywhere. When I know that I want to make a very large print, I stay at or below the maximum f-stop for diffraction limited resolution.

In the case of the Canon G7, an excellent 10 megapixel point-n-shoot camera (which I also use), it is diffraction limited at f/2. Yet the widest aperture on the camera is f/2.8. What this means is that the camera NEVER can deliver its full stated 10 megapixel resolution. Diffraction will limit it to less than this. The 10 megapixel sensor is thus more of a marketing slogan than reality. It suggests that if Canon wants to come out with a G8 camera, then there is no point in going beyond 10 megapixels; they’d better either upgrade the lens, or admit that diffraction limits the resolution to less than what the sensor is producing.

Note that these calculations assume that the lens is sufficient to also achieve diffraction limited performance. That is probably a good assumption for many top quality lenses, particularly macro lenses and prime telephotos from good manufacturers. It is surely not the case for many zoom lenses, or some prime wide angle lenses, particularly near the edge of the frame. Neither Johnson’s calculations, nor mine, factor in lens aberrations – we are both assuming that the lenses are perfect and can achieve diffraction limited resolution. In other words, your actual results may be WORSE than predicted above, and they cannot possibly be any better.

___________________________________________________________

A Response by Charles S. Johnson, Jr.

Nathan Myhrvold raises an interesting point. He is concerned with achieving the maximum resolution from his equipment rather than trying to adjust or maximize the depth of field. That is a reasonable objective; and as he notes, it requires different criteria from the ones I used. I am delighted to see this discussion because it raises awareness of the diffraction problem.

Myhrvold’s analysis suggests that diffraction broadening renders high pixel density sensors less effective, or at least that they will always suffer from diffraction limited resolution. That raises important questions about optimum pixel size and how small a pixel can be. In my essay I avoided detailed consideration of pixel size and simply assumed that the pixel density was sufficient to describe the Airy broadening function resulting from diffraction. The pixel size question is not easy, but it leads to interesting physics. I like that sort of thing, but I thought that my essay was heavy enough as it was.

Since the question has been raised, I do have a few comments. My analysis of pixel size effects leads to very different results from those reported by Myhrvold. I conclude that the Canon 1Ds Mark II, with a pixel pitch of 7.2 microns, can use all of its resolution to describe an image at f/22, and that at f/2.8 aliasing would be severe without an anti-aliasing filter. My assumptions are quite different from those made by Myhrvold, and some explanation is required. The basic idea is that, according to sampling theory, more than one pixel is required to describe a diffraction spot. Therefore, we need to determine what pixel size best describes a diffraction-broadened image.

For obvious commercial reasons optical engineers have investigated pixel size effects with sophisticated simulation programs. For example, Brian A. Wandell and co-workers at Stanford University have published extensively on engineering aspects of sensors. Some relevant links are listed below. My analysis is based on the work of Wandell, et al.

Two of the factors that limit pixel size reduction are noise and diffraction blurring. I will ignore noise and will first consider a monochrome sensor. Pixels sample an image at intervals that depend on the pixel pitch, and the Nyquist theorem tells us that there must be at least two samples in a distance equal to the diffraction spot diameter to avoid aliasing, i.e. to correctly describe the image. More frequent samples will not improve the resolution, and fewer samples will lead to aliasing. When a Bayer pattern is used as the color filter array, the pixel pitch must be even smaller. To avoid aliasing at all wavelengths we must account for blue light (400 nm). That is to say, two different blue pixels must sample the image within the diameter of the blue diffraction spot, for a total of about four pixels across the spot. With our previous notation, dividing the Airy disk diameter 2.44 × λ × N among four pixels, the pixel pitch must be 0.61 × 400 nm × N or less. Therefore, at N = 22 the pixel pitch must be about 5 microns or less, and at N = 2.8 it cannot exceed about 0.7 micron. This is a serious limitation because aliasing with a color filter array can lead to color artifacts even in monochrome images!
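The pitch limit above is simple arithmetic, and a minimal sketch makes it easy to try other f-numbers. It assumes, as the paragraph does, that the spot diameter is the Airy disk diameter to the first dark ring (2.44 × λ × N), that about four pixels must span it on a Bayer sensor, and that blue light is 400 nm. The helper name `max_pixel_pitch_um` is hypothetical.

```python
# Sketch: largest pixel pitch that still puts about four pixels across the
# blue Airy disk, following the sampling argument in the text.
# Assumptions (labelled): Airy disk diameter = 2.44 * wavelength * N (to the
# first dark ring), four pixels per diameter for a Bayer sensor, 400 nm light.
# The helper name is hypothetical.

def max_pixel_pitch_um(f_number, wavelength_nm=400.0, pixels_across_spot=4):
    """Largest pitch (microns) that still meets the sampling criterion."""
    airy_diameter_um = 2.44 * (wavelength_nm / 1000.0) * f_number
    return airy_diameter_um / pixels_across_spot  # equals 0.61 * lambda * N for 4 pixels


if __name__ == "__main__":
    pitch_1ds_mk2_um = 7.2  # Canon 1Ds Mark II pitch quoted in the text
    for n in (2.8, 5.6, 8, 11, 16, 22):
        limit = max_pixel_pitch_um(n)
        status = "undersampled" if pitch_1ds_mk2_um > limit else "fully sampled"
        print(f"f/{n}: max pitch {limit:.2f} um; a 7.2 um pitch is {status}")
```

At f/22 the limit comes out near 5.4 microns and at f/2.8 near 0.68 micron, matching the rounded figures above. Under this criterion the 7.2-micron pitch is coarser than the limit at every f-number listed.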

So how can digital cameras, and especially DSLR cameras with large pixels, work with high-quality lenses and small diffraction broadening? This is only possible through the use of anti-aliasing filters that blur the image! Such filters are, of course, a standard feature of modern digital cameras, and cameras with very small pixels need less blurring to avoid aliasing. The conclusion is that small sensors with high pixel density are not necessarily overkill, at least as far as resolution is concerned.
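To make the aliasing argument concrete, here is a minimal sketch of how detail finer than the sensor's Nyquist limit reappears at a false, coarser frequency when no anti-aliasing filter is present. It assumes simple one-dimensional point sampling at the 7.2-micron pitch quoted earlier; the helper names are hypothetical.

```python
# Sketch: where detail above the Nyquist limit "lands" after sampling.
# Assumptions (labelled): 1-D point sampling at the pixel pitch, no
# anti-aliasing filter, 7.2 micron pitch; helper names are hypothetical.

def nyquist_cycles_per_mm(pitch_um):
    """Highest spatial frequency the pixel grid can represent."""
    sampling_rate = 1000.0 / pitch_um  # samples per mm
    return sampling_rate / 2.0


def aliased_frequency(f_cycles_per_mm, pitch_um):
    """Apparent frequency after sampling, folded back into 0..Nyquist."""
    fs = 1000.0 / pitch_um
    return abs(f_cycles_per_mm - round(f_cycles_per_mm / fs) * fs)


if __name__ == "__main__":
    pitch = 7.2
    print(f"Nyquist limit: {nyquist_cycles_per_mm(pitch):.1f} cycles/mm")
    for f in (50, 100, 120):
        print(f"{f} cycles/mm is recorded as "
              f"{aliased_frequency(f, pitch):.1f} cycles/mm")
```

With a 7.2-micron pitch the Nyquist limit is about 69 cycles/mm, and detail at 100 cycles/mm would be recorded as roughly 39 cycles/mm. An anti-aliasing filter avoids this by attenuating such frequencies before the image is sampled, at the cost of some blur.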

Throughout, I have assumed diffraction-limited lenses. Most real lenses do not reach the diffraction limit. Blurring from lens aberrations may depend strongly on the wavelength of the light and will certainly depend on the distance from the center of the image. I expect that Canon 30D users can safely exceed N = 7 with most lenses and that the improvement or degradation of resolution will depend more on lens characteristics than on diffraction broadening and pixel size.

P. B. Catrysse and B. A. Wandell (2005). “Roadmap for CMOS image sensors: Moore meets Planck and Sommerfeld.”

Chen, Catrysse, El Gamal, and Wandell (2000). “How Small Should Pixel Size Be?”

___________________________________________________________

Further Comments – By Nathan Myhrvold

Unfortunately, Johnson is mistaken in his interpretation of how diffraction limits pixel resolution. Diffraction broadens the Airy disk, and this limits resolution. The Nyquist theorem does not, and cannot, save you from this.

The effect of the Airy disk (what he calls diffraction broadening) is very similar to doing a Gaussian blur in Photoshop. Try this experiment – do a Gaussian blur of radius 1.5 pixels. Does it blur the image? Well, of course it does, and the Airy disk effect is similar (but not exactly the same). It does not destroy the image, and you could probably make a small print after the blur, but at a very large size it would be problematic. We can recover some of this by subsequent sharpening – indeed that is one of the reasons we need to apply a sharpening algorithm (the other is the anti-aliasing filter). The articles by Brian Wandell et al. from Stanford referenced above do not contradict this at all; they are fully consistent with my point of view. In fact, pages 6 and 7 and Figure 4 of the first article (http://white.stanford.edu/~brian/papers/ise/CMOSRoadmap-2005-SPIE.pdf) make the point, albeit indirectly.
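The Gaussian-blur experiment can also be run numerically. This minimal sketch blurs sine patterns of different periods with a Gaussian of sigma = 1.5 pixels and reports how much contrast survives, next to the textbook prediction exp(-2π²σ²/P²) for a continuous Gaussian. Treating Photoshop's “radius” as the Gaussian sigma is an assumption made here, not something stated in the text, and the Airy disk's profile is not exactly Gaussian.

```python
import math

# Sketch of the Gaussian-blur experiment in numbers.
# Assumption (labelled): Photoshop's "radius" is treated as the Gaussian
# standard deviation sigma in pixels; Photoshop's own definition may differ.

def gaussian_kernel(sigma, half_width=None):
    """Normalized 1-D Gaussian kernel, truncated at about 4 sigma."""
    if half_width is None:
        half_width = int(math.ceil(4 * sigma))
    weights = [math.exp(-(x * x) / (2 * sigma * sigma))
               for x in range(-half_width, half_width + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blurred_contrast(period_px, sigma, length=400):
    """Blur a unit-amplitude sine of the given period; return surviving amplitude."""
    signal = [math.sin(2 * math.pi * i / period_px) for i in range(length)]
    kernel = gaussian_kernel(sigma)
    h = len(kernel) // 2
    out = []
    for i in range(h, length - h):  # skip the ends to avoid boundary effects
        out.append(sum(kernel[k] * signal[i + k - h] for k in range(len(kernel))))
    return (max(out) - min(out)) / 2


if __name__ == "__main__":
    sigma = 1.5
    # Periods are multiples of 4 so integer samples land on the sine's peaks.
    for period in (4, 8, 16):
        measured = blurred_contrast(period, sigma)
        predicted = math.exp(-2 * math.pi ** 2 * sigma ** 2 / period ** 2)
        print(f"period {period:2d} px: {measured:.3f} of contrast left "
              f"(continuous Gaussian predicts {predicted:.3f})")
```

For these settings, detail with a 4-pixel period keeps only about 6% of its contrast, while an 8-pixel period keeps about half: the image is blurred rather than destroyed, and the finest detail suffers most.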

When Johnson says “I conclude that the Canon 1Ds, Mark II, with a pixel pitch of 7.2 microns, can use all of its resolution to describe an image at f/22,” well, it just isn’t so. Not even close. Assuming a diffraction-limited lens (i.e. no aberrations or lens defects), an image at f/22 will look very similar to an image taken at f/8 that has subsequently had a Gaussian blur of around 1.5 pixels radius applied. With sufficient sharpening work you can get some of this back, but something very real has been lost from the image. Some raw conversion software (such as DxO) tries to deal with some of this, since it knows the f-number, but ultimately the loss is not reversible. If you do experiments, it is pretty obvious (see below).
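One way to put numbers on the f/8 versus f/22 comparison is the standard diffraction MTF of an aberration-free lens with a circular aperture, evaluated at the sensor's Nyquist frequency. This minimal sketch assumes incoherent 550 nm light and the 7.2-micron pitch, and it ignores pixel-aperture and anti-aliasing-filter losses; the formula is textbook optics, not taken from either author.

```python
import math

# Sketch: contrast a perfect (diffraction-limited) lens delivers at the
# sensor's Nyquist frequency for a 7.2 micron pitch.
# Assumptions (labelled): incoherent light at 550 nm, circular aperture,
# no aberrations, no pixel-aperture or anti-aliasing-filter losses.

def diffraction_mtf(freq_cycles_per_mm, f_number, wavelength_um=0.55):
    """MTF of an aberration-free lens with a circular aperture."""
    cutoff = 1000.0 / (wavelength_um * f_number)  # cycles/mm where MTF reaches 0
    v = freq_cycles_per_mm / cutoff
    if v >= 1.0:
        return 0.0
    return (2 / math.pi) * (math.acos(v) - v * math.sqrt(1 - v * v))


if __name__ == "__main__":
    pitch_um = 7.2
    nyquist = 1000.0 / (2 * pitch_um)  # about 69 cycles/mm
    for n in (8, 11, 16, 22):
        print(f"f/{n}: MTF at Nyquist = {diffraction_mtf(nyquist, n):.2f}")
```

Under these assumptions the contrast at the Nyquist frequency falls from roughly 0.6 at f/8 to under 0.1 at f/22. How much of that loss counts as “using all of its resolution” is exactly what the two authors disagree about; the sketch only quantifies it.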

I was going to launch into a full treatment of this topic in rebuttal to spell out what happens, but then I discovered the following site, which has an excellent description, complete with examples: http://www.cambridgeincolour.com/tutorials/diffraction-photography.htm

This includes a very cool applet in the section called VISUAL EXAMPLE: APERTURE VS. PIXEL SIZE. I recommend that you run your mouse over the various apertures in the Aperture column; it will show you the Airy disk for each aperture and will stay on whichever one you last left it on. Then run your mouse over the cameras and it will show you the pixel grid. This shows very clearly what the effect of diffraction is. Play with it and it will become clear what happens.

The Cambridge in Colour page referenced above also has actual photographs taken with a Canon 20D at f/8, f/11 and f/22. Mouse over the various f-numbers in the caption and you will see the difference. If you don’t believe the calculations, just look at those examples. They show what happens to high-resolution detail (some corduroy fabric) when you take the photo at various f-numbers.

Or, take your own photos – use a very high-quality lens (such as the prime macro lenses from Canon or Nikon) and focus on something with a lot of high-resolution detail, ideally in a single plane of focus (so depth of field is not an issue). Take the shot at various f-numbers and compare for yourself whether f/8 and f/22 resolve the same detail. By the basic physics of light, they just can’t.

Note that the Cambridge in Colour site uses a somewhat different criterion for diffraction limitation than the one I calculated – it treats an image as diffraction limited when the Airy disk is twice the pixel size. I used twice the pixel size for red and blue and 1.4X for green (but then rounded up). Given that in all modern cameras you select apertures in half stops or one-third stops, there is no practical difference. Also, resolution changes little near the peak, so the difference between the peak resolution and the resolution one stop higher in f-number is not great. But by the time you go 3 f-stops higher you really do notice it.
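The criterion can be turned into a limiting f-number and then pushed past it. This minimal sketch assumes green light at 550 nm, an Airy disk diameter of 2.44 × λ × N, and the 7.2-micron pitch from earlier in the discussion; the function names are hypothetical, and `pixels_per_airy=2.0` encodes the Cambridge in Colour style rule described above.

```python
import math

# Sketch: "Airy disk = k pixels" criterion as a limiting f-number, then the
# Airy disk size in pixels one and three full stops beyond that limit.
# Assumptions (labelled): 550 nm light, Airy diameter = 2.44 * lambda * N,
# 7.2 micron pitch; function names are hypothetical.

def airy_diameter_um(f_number, wavelength_um=0.55):
    return 2.44 * wavelength_um * f_number


def limiting_f_number(pitch_um, pixels_per_airy=2.0, wavelength_um=0.55):
    """f-number at which the Airy disk spans `pixels_per_airy` pixels."""
    return pixels_per_airy * pitch_um / (2.44 * wavelength_um)


if __name__ == "__main__":
    pitch = 7.2
    n_limit = limiting_f_number(pitch)
    print(f"limit: about f/{n_limit:.1f}")
    for stops in (0, 1, 3):
        n = n_limit * math.sqrt(2) ** stops  # each full stop multiplies N by sqrt(2)
        print(f"+{stops} stop(s), f/{n:.1f}: Airy disk spans "
              f"{airy_diameter_um(n) / pitch:.1f} pixels")
```

For a 7.2-micron pitch the limit lands near f/10.7; one stop beyond it the Airy disk spans about 2.8 pixels, and three stops beyond it about 5.7 pixels. Passing `pixels_per_airy=1.4` gives the green-channel variant Myhrvold mentions.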

As both Johnson and I have stated, all of this assumes perfect, diffraction-limited lenses. In a real lens – particularly a zoom lens, or a fast wide-angle lens (or worse still, a fast wide-angle zoom) – there are aberrations that get in the way in addition to diffraction. These are often worse at the edges of the frame than in the center. So in a real situation you may be much worse off than the diffraction limit. But that does not mean you can ignore diffraction – it just means that you may have additional optical problems that give you resolution even worse than predicted above. The Airy disk and diffraction limitation still occur. It may be, however, that a particular lens’s aberrations are so much better at one f-stop than another that it is worth choosing that f-stop anyway.

Finally, I will point out that if you are really after high resolution, you can get around the issues above by using multiple images and a software technique called super-resolution. Here is a software tool that does this, converting a sequence of images into one higher-resolution image: http://www.photoacute.com/studio/index.html
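As a toy illustration of the sampling side of multi-frame super-resolution, this sketch interleaves frames taken with known sub-pixel shifts onto a finer grid (the simplest shift-and-add scheme). It assumes a 1-D signal, exact and known shifts of 1/k pixel, and no noise or blur; it is not PhotoAcute’s algorithm, and real tools must also estimate the shifts and typically sharpen the result. The function names are hypothetical.

```python
import math

# Toy shift-and-add super-resolution in 1-D.
# Assumptions (labelled): exact, known shifts of 1/k coarse pixel, no noise,
# no blur, point sampling. Real tools also align frames and usually sharpen;
# this is not any particular product's method.

def capture(scene, factor, offset):
    """Simulate a coarse sensor: keep every `factor`-th sample from `offset`."""
    return scene[offset::factor]


def shift_and_add(frames):
    """Interleave k frames shifted by 1/k pixel into one k-times denser signal."""
    k = len(frames)
    n = min(len(f) for f in frames)
    out = []
    for i in range(n):
        for j in range(k):
            out.append(frames[j][i])
    return out


if __name__ == "__main__":
    # Detail with a period of 3 fine samples: far too fine for one coarse frame.
    fine = [math.sin(2 * math.pi * i / 3.0) for i in range(60)]
    frames = [capture(fine, 4, off) for off in range(4)]  # four shifted frames
    rebuilt = shift_and_add(frames)
    print("max reconstruction error:",
          max(abs(a - b) for a, b in zip(rebuilt, fine)))
```

Each frame alone samples only every fourth point of the fine pattern, too coarse to represent it, but the four shifted frames interleave back into the original. That exactness depends entirely on the idealized assumptions in the comments.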

Nathan

Read this story and all the best stories on The Luminous Landscape
